One contention in my work is that the new, experimental microfinance impact studies are more reliable than the older, non-experimental ones, not to mention the individual success stories on microfinance web sites. A working paper by Ram Rajbanshi, Meng Huang, and Bruce Wydick speaks to this thesis in an interesting way. To whet your appetite for my pedagogic exegesis, here's a passage from the introduction:

...we find that just over two-thirds (68.3%) of the significant apparent impact observed by practitioners based on “before-and-after” observation of microfinance borrowers is illusory. Moreover, only 4 out of 13 of the impact variables that show significant impacts for borrowers after taking microfinance loans are significant in more rigorous...estimations.

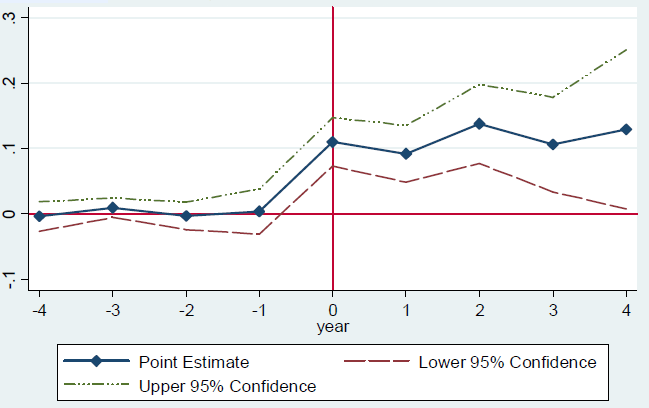

To reach this conclusion, the study applies two different evaluation methods to the same data, on a microfinance program in Nepal. The bad method compares how much people invest before and after they take loans. By definition, it looks just at those who take loans. This approach is analogous to microfinance practitioners observing and reporting that their clients routinely expand their businesses with the new loans, and prosper as a result. A big pitfall here is that a borrower may not approach the lender until she has an investment plan ready to roll, one which she may pursue with financing from many sources, and may pursue whether or not she gets the microloan. It is then misleading to attribute all of the investment to the availability of microfinance.

The better method in the paper is a big improvement, though not as iron-clad as randomization. It looks at the uptick in investment among households as it relates not to when they take a microloan but to when they first could do so. It asks not, "What did a microfinance loan do for my investment?" but "What did the arrival of microfinance in my village do for my investment?" And it compares the answers in villages where microfinance became available early to those in nearby, similar villages where it became available several years later. The idea here is that within each pair of villages in the study (one where microfinance arrived early and a nearby one where it arrived late) the decision of the lender, Jeevan Bikas Samaj, about when microfinance became available in each village was arbitrary, equivalent to a flip of a coin. So it is much more meaningful to examine the sequelae of that apparently arbitrary event. In contrast, when someone actually decides to take a loan is, as just discussed, a much less arbitrary event.

Now, how Jeevan Bikas Samaj actually decided which villages to serve first is not examined. And this is the key assumption that you must accept or reject in deciding whether to trust the more-rigorous evaluation approach in this study. The worry is that the decision wasn't actually arbitrary, but was made on the basis of some hidden characteristics of the villages chosen first that also led families in those villages to be better off. This would create the illusion of impact.

One unusual aspect of the study is its use of retrospective data. Surveyors visited subjects only once, but asked them to think back over 2001--10 to recall when major events (investments) occurred. In this way, the surveyors constructed histories. As World Bank economist Martin Ravallion recently showed, retrospective data can be unreliable. Care to recall, without the use of paper records, how much money you spent in 2005? But recognizing this, the authors focus on a few questions for which they think recall is most reliable: unusual, major events that either happened or didn't, such as buying a cow or a piece of land. "When it was difficult for respondents to determine the year of an event, enumerators used ages of children, births and deaths of family members, years of important cricket matches, and other key benchmarks in the household history to pinpoint the year in which the event took place."

The study's results are captured in a couple of graphs. The first shows the results of the naive before-after method. In particular, it shows that in the four years before a household took a loan, there was on average no change in the probability of it making a major investment. But in the year the loan is taken (year 0), the probability jumped by 0.1, or 10 percentage points. It stayed around that level in the next four years. In the graph, the blue line shows the frequency of making a major investment, by year. The red and green lines bracket the "confidence intervals" around the blue to reflect the margin of error in the survey.

The second graph shows the result of the more rigorous method, which measures time from when loans became available rather than from when they were taken, and which compares against control groups. Now, after the onset of credit availability, marked as year 0, investment does not climb as fast, nor perhaps as high:

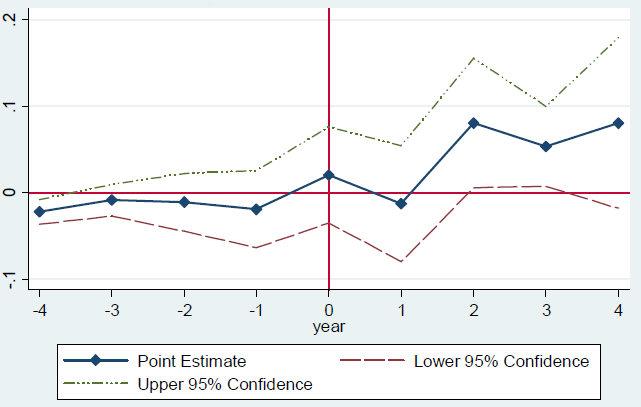
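
To make the contrast between the two methods concrete, here is a minimal Python sketch on synthetic data. It is not the paper's estimation. The investment rates, the arrival years, and the rule that a household borrows in the first year it has an investment ready are all assumptions invented for illustration, and a simple difference-in-differences across one early and one late village stands in for the authors' more careful regressions.

```python
import random

# All numbers below are invented for illustration; none come from the paper.
BASE_RATE = 0.10      # assumed yearly chance of a major investment without credit
TRUE_EFFECT = 0.05    # assumed rise in that chance once credit is available
YEARS = range(10)     # ten survey years, indexed 0..9
EARLY_ARRIVAL = 3     # year credit reaches the "early" village
LATE_ARRIVAL = 7      # year credit reaches the paired "late" village


def simulate_village(n_households, arrival_year, rng):
    """Return one (yearly investments, first-loan year) record per household."""
    households = []
    for _ in range(n_households):
        invested, loan_year = [], None
        for year in YEARS:
            available = year >= arrival_year
            rate = BASE_RATE + (TRUE_EFFECT if available else 0.0)
            invests = rng.random() < rate
            # Selection: a household borrows only when it already has an
            # investment ready to go, so its first loan coincides with one.
            if available and invests and loan_year is None:
                loan_year = year
            invested.append(invests)
        households.append((invested, loan_year))
    return households


def mean(values):
    values = list(values)
    return sum(values) / len(values)


def naive_before_after(households):
    """Borrowers only: investment rate from the loan year on, minus the rate before."""
    before, after = [], []
    for invested, loan_year in households:
        if loan_year is None:
            continue  # non-borrowers are invisible to this method
        before.extend(invested[:loan_year])
        after.extend(invested[loan_year:])
    return mean(after) - mean(before)


def availability_diff_in_diff(early, late):
    """Change in the early village minus change in the late one, all households."""
    def rate(households, years):
        return mean(inv[y] for inv, _ in households for y in years)

    pre = range(0, EARLY_ARRIVAL)
    post = range(EARLY_ARRIVAL, LATE_ARRIVAL)  # only the early village has credit
    return (rate(early, post) - rate(early, pre)) - (rate(late, post) - rate(late, pre))


rng = random.Random(0)  # fixed seed so the run is repeatable
early = simulate_village(20_000, EARLY_ARRIVAL, rng)
late = simulate_village(20_000, LATE_ARRIVAL, rng)
naive = naive_before_after(early)
rigorous = availability_diff_in_diff(early, late)
print(f"naive before-after estimate:  {naive:.3f}")
print(f"availability diff-in-diff:    {rigorous:.3f}")
print(f"true effect built into data:  {TRUE_EFFECT:.3f}")
```

Under these made-up assumptions, the before-after number comes out well above the effect built into the data, because households borrow exactly when they were going to invest anyway. The availability-based comparison lands near the built-in effect, but only because the simulation makes the timing of arrival unrelated to anything else about the villages, which is precisely the assumption the study asks you to accept.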

Comparing the high trajectory for the bad method and the lower one for the better method, the authors reach that key finding: two thirds of the difference between the optimistic assessments by microfinance practitioners and the more sour ones of the randomized studies is caused by bias inherent in the naive before-after comparisons of the practitioners.

The authors go even further, but here I don't find them so convincing. They submit that the remaining one-third of the discrepancy is caused by a bias built into most of the randomized studies. Why? By the "law" of diminishing returns, the impact of the nth lender to arrive in an area is less than that of the earlier arrivals. So if you evaluate just the nth one, you're likely to underestimate the total impact of microcredit. In the Hyderabad study, for example, Spandana was the latest of many institutions to lend in the city. Maybe the first to bring credit to the city's poor did them much more good. In contrast, in this study, Jeevan Bikas Samaj is the area's first microcreditor. This explains, the authors assert, why its impacts are more positive.

The idea is entirely plausible. But it has three weaknesses. First, as the authors acknowledge (top of page 19), sometimes returns to capital increase. The law of diminishing returns isn't really a law; this is economics, not physics. Maybe a $100 loan isn't enough to start a microenterprise, but if you can get to $200, through a second loan, then you're in business. Abhijit Banerjee suggests that the returns to capital for microentrepreneurs are a staircase. Thus the nth lender could make a bigger difference than those who come before.

Second, whereas the paper has evidence to back the assertion that two thirds of the discrepancy owes to biases inherent in naive before-after evaluation, it has no evidence that the remaining third is caused by bias inherent in the randomized studies. There is only a prediction from a simple theoretical model.

Third, it's not clear that there's a discrepancy anyway. Like this Nepal study, the randomized ones tend to find that microcredit stimulates investment. That includes the Morocco trial, which also looks at the impacts of the first microfinance institution in an area rather than the "nth" one.

So, interestingly, while I don't trust this study quite as much as the randomized ones, it does produce similar results for the one kind of outcome studied, investment. And it has the advantage of looking at impacts over 4 years instead of 12--18 months. And its side-by-side comparison of two evaluation methods is an instructive lesson in the merit of rigor.

Note: The classic in this literature is LaLonde 1986.

DISCLAIMER & PERMISSIONS

CGD's publications reflect the views of the authors, drawing on prior research and experience in their areas of expertise. CGD is a nonpartisan, independent organization and does not take institutional positions. You may use and disseminate CGD's publications under these conditions.