
PISA 2018 results were released today. 79 countries and 600,000 students took part in the seventh triennial round of the highly scrutinized tests which assess the skills and knowledge of 15-year-olds in maths, reading, and science. Here are a few quick reactions from the edu-data enthusiasts here at CGD.

1. Just a handful of countries improved significantly since they first participated in PISA

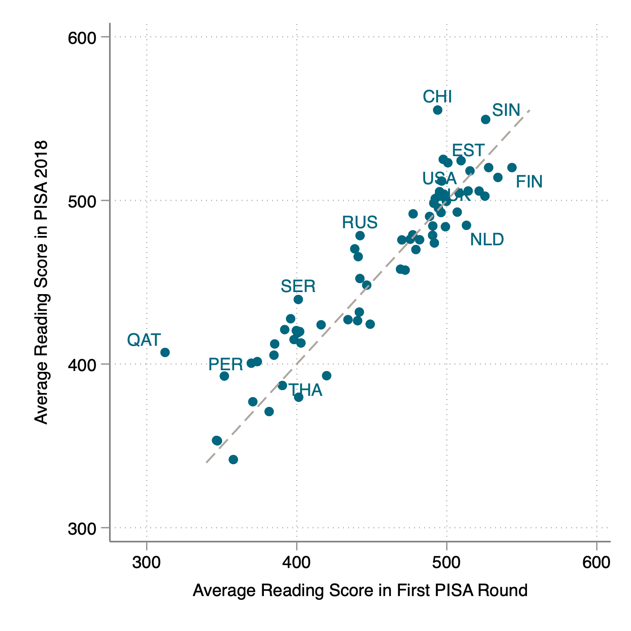

A stark message from PISA 2018 is that very few countries have achieved significant improvements in performance since they first joined PISA. Seventy countries participated in PISA 2018 and at least one round before then. Comparing each country's performance in 2018 with its performance when it first joined allows us to see whether any have improved or declined significantly over almost twenty years. Figure 1 shows most countries remained stable, with a disappointingly small number of outliers achieving significant improvement. More encouragingly, only a handful of countries show a significant decline in performance.

Figure 1. Performance from first participation in PISA to 2018

Source: authors' analysis of PISA data

Note: In this figure we label only the furthest outliers. Thailand, USA, Finland, Netherlands, and Russia entered PISA in 2003; Estonia, Serbia, UK, and Qatar in 2006; Peru and Singapore in 2009; and Beijing-Shanghai-Jiangsu-Guangdong (China) in 2015. In 2018 Guangdong province was replaced with Zhejiang. All other (unlabelled) countries in the figure entered in the first round in 2000.
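For readers who want to try this kind of first-round-versus-2018 comparison themselves, here is a minimal sketch of one common way to flag a "significant" change. It is not necessarily the test behind Figure 1: the function name, the 1.96 threshold, and the optional link-error term (extra uncertainty from placing two test rounds on one scale) are all assumptions, and the scores in the usage lines are made up.

```python
import math


def classify_change(first_score, first_se, latest_score, latest_se,
                    link_error=0.0, z_crit=1.96):
    """Label a country's change between two rounds (hypothetical helper).

    Assumes the two mean scores are independent estimates, so the standard
    error of their difference combines both standard errors and, if
    supplied, a link error between the two rounds.
    """
    diff = latest_score - first_score
    se_diff = math.sqrt(first_se ** 2 + latest_se ** 2 + link_error ** 2)
    z = diff / se_diff
    if z > z_crit:
        return "improved"
    if z < -z_crit:
        return "declined"
    return "stable"


# Made-up numbers, for illustration only.
big_gain = classify_change(480, 3.0, 500, 3.0)
small_gain = classify_change(480, 3.0, 485, 3.0, link_error=4.0)
print(big_gain, small_gain)
```

With a rule like this, a five-point gain can easily fall inside the noise, which is one reason so many countries end up classed as stable.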

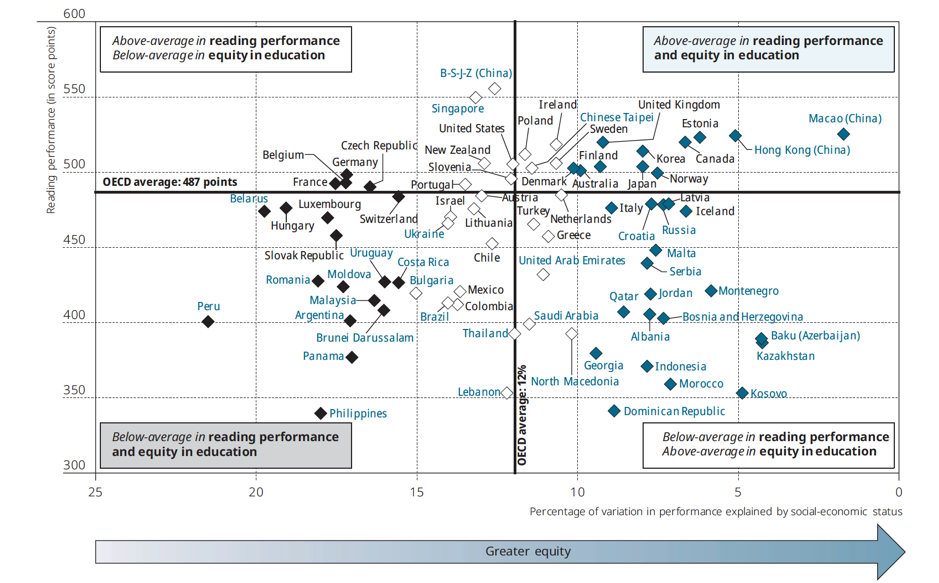

Importantly, this data masks differences in equity. Figure 2 shows that some countries, including Poland and Estonia—outliers in terms of absolute performance—have also achieved high levels of equity.

Figure 2. Reading performance and equity

Source: PISA 2018 Volume 2, Page 60.

2. Something is going on with “star performer” Vietnam

Vietnam is a lower-middle income country with a GDP per capita of just $2,500. Yet in 2015, Vietnam was ranked 8th in science, with a point score that was 16 points higher than the United Kingdom and 29 points higher than the United States. In 2012 it ranked 16th in maths and 18th in reading, again ahead of both the US and the UK and higher than any other developing country. Students in Vietnam sat the 2018 PISA tests but the data is not included in reports that compare performance with other countries. It looks like the OECD PISA team needs more time to look into this issue. They say, “whatever its causes, the statistical uniqueness of Vietnam’s response data implies that performance in Vietnam cannot be validly reported on the same PISA scale as performance in other countries.”

Questions have been raised previously about Vietnam’s stellar performance. Nic Spaull noted that more 15-year-olds were excluded from the PISA sampling frame in Vietnam than in any other country: about half of all 15-year-olds in the country are not represented by the PISA sample. Paul Glewwe looked at Vietnam’s PISA success back in 2012. He found that, were the PISA sample adjusted to make it more representative of the socio-economic status of 15-year-old students in the 2012 Vietnam Household Living Standards Survey, it would yield lower test scores. He further found that, since Vietnam has the third lowest school enrollment rate of the countries that participated in PISA 2012, a comparison that focuses on the top 50 percent of the entire population of 15-year-olds (i.e. including those not enrolled in school, who one could assume would be in the bottom 50 percent) would greatly reduce Vietnam’s rank among the 63 PISA countries, to 40th in maths and 41st in reading. But even with these adjustments, Vietnam remains a positive outlier in absolute scores conditional on its low level of GDP.
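The logic of the "top 50 percent" comparison can be sketched in a few lines. This is an illustration of the idea as described here, not Glewwe's actual procedure: the function name is hypothetical, the scores are made up, and it assumes every out-of-school 15-year-old would score below every enrolled student.

```python
def top_half_of_cohort_mean(enrolled_scores, enrollment_rate):
    """Mean score of the top 50 percent of ALL 15-year-olds (hypothetical helper).

    If a share `enrollment_rate` of the cohort is in school and everyone out
    of school ranks at the bottom, the top half of the whole cohort is the
    top (0.5 / enrollment_rate) share of enrolled students.
    """
    if not 0.5 <= enrollment_rate <= 1.0:
        raise ValueError("this sketch only handles enrollment between 50% and 100%")
    share_kept = 0.5 / enrollment_rate
    ranked = sorted(enrolled_scores, reverse=True)
    n_kept = max(1, round(share_kept * len(ranked)))
    top = ranked[:n_kept]
    return sum(top) / len(top)


scores = [300, 400, 500, 600]  # made-up scores of enrolled students

full_enrollment = top_half_of_cohort_mean(scores, 1.0)  # keeps the best half
half_enrollment = top_half_of_cohort_mean(scores, 0.5)  # keeps everyone enrolled
print(full_enrollment, half_enrollment)
```

The same set of enrolled scores yields a higher top-half mean when enrollment is universal than when only half the cohort is in school. That is why the adjustment lifts high-enrollment countries relative to low-enrollment ones and pushes Vietnam down the rankings.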

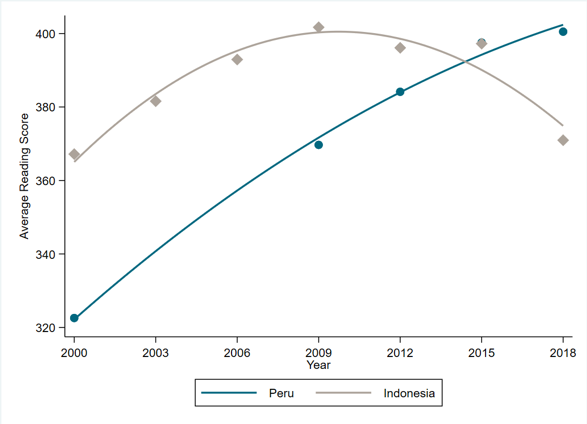

3. PISA data has some limitations for countries without full secondary enrolment

Indonesia and Peru are two developing countries that do participate in PISA and, as the chart above shows, have shown some improvement in their scores over the last two decades, albeit from a low starting point and with a recent decline for Indonesia. However, PISA only surveys children currently enrolled in secondary school—a limitation for countries that have not achieved universal access to secondary education. DHS and MICS surveys do the exact opposite: They do not collect data on literacy for individuals who have reached secondary level or more as they are assumed to be literate—in other words, they measure the literacy rates of those who are not sampled by PISA surveys. The lack of inclusion of these students in PISA is a limiting factor to the usefulness of PISA, particularly to compare performance across developing countries.

CGD researchers Alexis Le Nestour and Justin Sandefur are using DHS and MICS data to reconstruct long trends of expected literacy at grade 5. Since DHS and MICS surveys collect information on women aged 15 to 49, data from older women can be used to estimate the progress of schooling systems over a longer period of time. PISA provides good insight into the education levels of those within the secondary school system, and we hope to use the MICS and DHS data to help complete the picture by providing insights into the efficiency of the schooling system for all children, not just for those who progressed to secondary school. Watch this space for that paper coming soon.
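To make the idea concrete, here is a rough sketch of how a single survey of women aged 15 to 49 can be turned into a trend over time. It is one plausible reading of the approach, not the method of the forthcoming paper: the field names, the ten-year birth cohorts, and the choice to look only at women whose highest grade is exactly 5 are all assumptions, and the records are made up.

```python
from collections import defaultdict


def literacy_at_grade5_by_cohort(records, cohort_width=10):
    """Share of women with exactly 5 years of schooling who can read,
    grouped by birth cohort (hypothetical helper and field names)."""
    counts = defaultdict(lambda: [0, 0])  # cohort start -> [literate, total]
    for r in records:
        if r["highest_grade"] != 5:
            continue
        birth_year = r["survey_year"] - r["age"]
        cohort = birth_year // cohort_width * cohort_width
        counts[cohort][1] += 1
        if r["can_read"]:
            counts[cohort][0] += 1
    return {c: lit / total for c, (lit, total) in sorted(counts.items())}


# Made-up survey records, for illustration only.
records = [
    {"survey_year": 2015, "age": 45, "highest_grade": 5, "can_read": False},
    {"survey_year": 2015, "age": 44, "highest_grade": 5, "can_read": True},
    {"survey_year": 2015, "age": 20, "highest_grade": 5, "can_read": True},
    {"survey_year": 2015, "age": 22, "highest_grade": 8, "can_read": True},
]
trend = literacy_at_grade5_by_cohort(records)
print(trend)
```

Older respondents stand in for the school system of earlier decades, so comparing cohorts gives a rough picture of whether five years of schooling produced more or less literacy over time.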

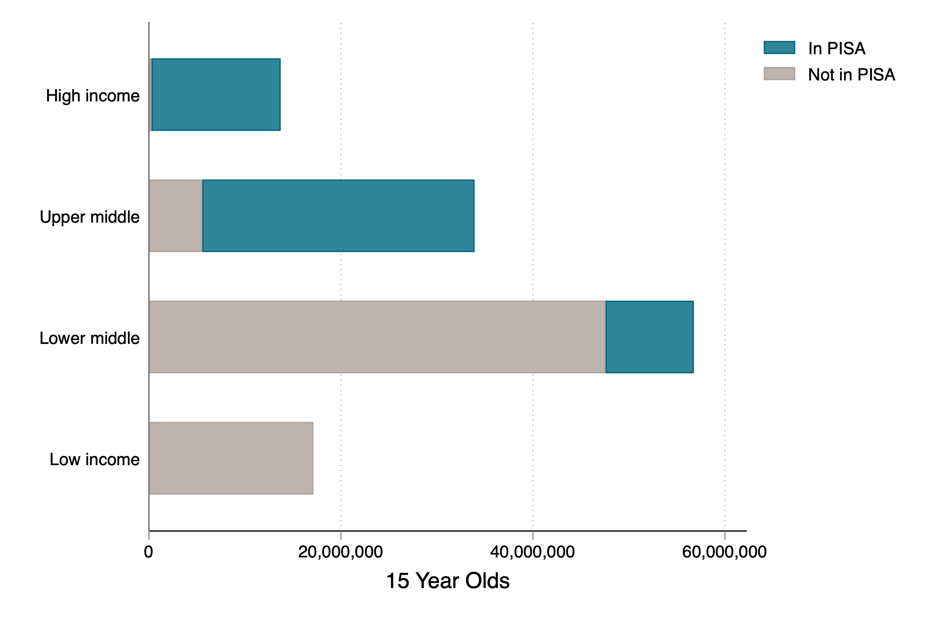

4. PISA is still a rich country game

PISA only covers about 42 percent of the world’s 15-year-olds, and that’s with a somewhat heroic assumption that all Chinese students are sampled. A few new countries participated in PISA for the first time this year: Belarus, Bosnia and Herzegovina, Brunei Darussalam, Morocco, the Philippines, Saudi Arabia, and Ukraine. But PISA largely remains a rich(er) country club and more children are not covered by PISA than are. This should change a bit in 2021: In January 2019, the Indian government announced its plan to rejoin PISA after a 10-year absence. It dropped out in 2009 after being placed 72nd out of 74 nations. Algeria, Tunisia, Azerbaijan, and Mauritius are also among the group of countries that previously participated in PISA but did not in 2018.

What’s clear is that PISA doesn’t yet work for developing countries and there’s a big gap in the world coverage map. The World Bank’s “Harmonized Learning Outcomes” makes an effort to fill that gap, by linking PISA with other tests that are available for low-income countries. But there are important weaknesses in the linking methodology. PISA doesn’t make its test questions open to other researchers, meaning that linking has to be done based on average national performance on totally different assessments of different subjects at different grades. CGD is working with J-PAL to join together different assessments based on individual questions, much as linking is done for PISA, but with the goal of producing an open-source bank of questions allowing anyone to design a test that can be rigorously compared to an international scale. We hope that will allow for cross-country comparison for much more of the world.

5. A high-profile PISA “shock” does not result in big improvement

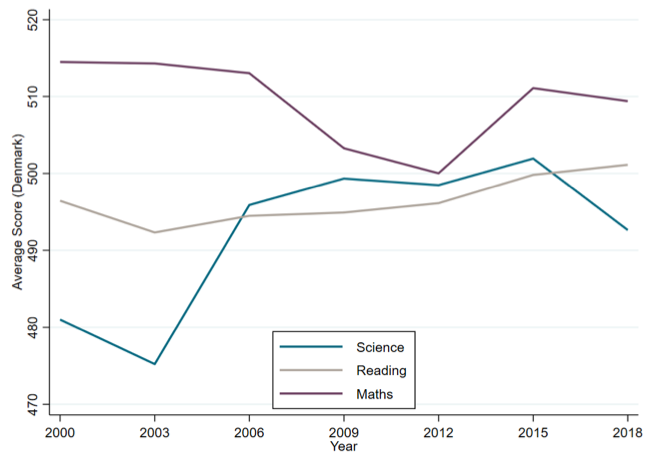

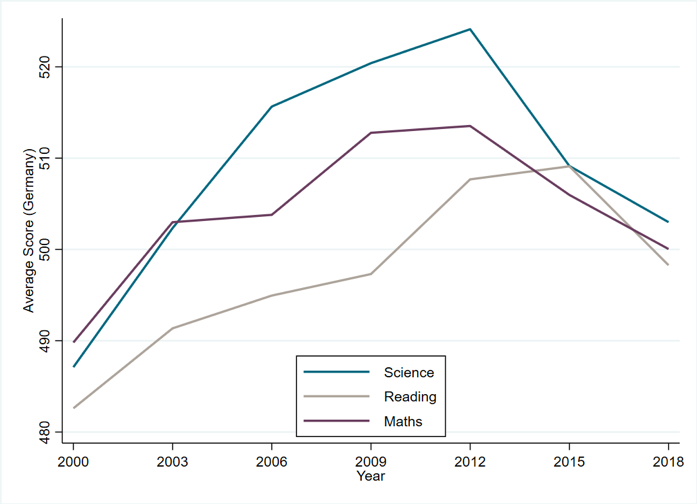

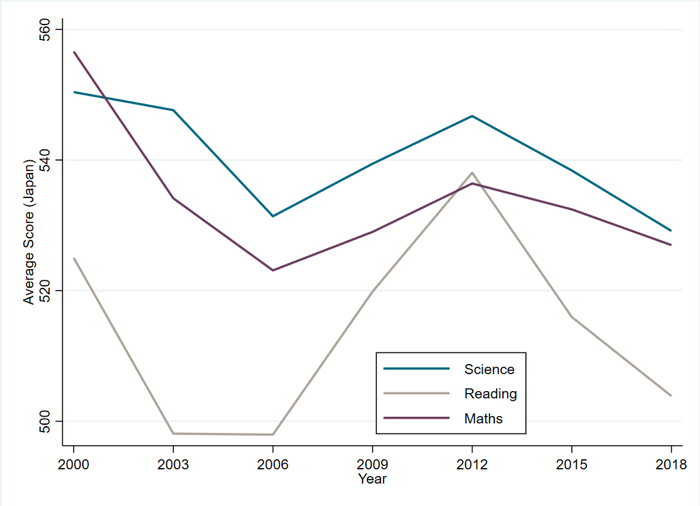

PISA is headline news today, but once the buzz has died down does a country’s ranking have any impact on education reforms? Denmark, Germany, and Japan experienced PISA “shocks” in the early years: a lower-than-expected performance in PISA 2000 for Denmark and Germany, and a performance drop in 2003 for Japan. The disappointing performance in Germany and Denmark, especially for students from disadvantaged or immigrant backgrounds, led to a swathe of reforms aiming to improve equity in their systems. In Japan, the drop in performance was perceived as a “crisis” and resulted in the reversal of the contentious “low pressure” curriculum.

Did their subsequent reforms work? In 2000 Germany ranked 21st and 20th in reading and maths respectively. This round it ranked 20th for both and performed dismally on equity. In 2000 Denmark was 16th for reading and 12th for maths. Today Denmark learned it came 18th in reading and 13th in maths, although it does do better on equity measures. Japan was disappointed to drop in 2003 to 14th in reading and 6th in maths. This round it came 15th in reading and 6th in maths. And the absolute scores in all three countries tell a similar story: very little improvement and some decline.

Figure 5. Do PISA shocks improve PISA scores?

Source: authors' analysis of PISA data

It’s too early to tell what the reaction will be to PISA 2018. Will Estonia be the new Finland, luring in delegations of education policymakers eager to learn from its edu-magic? Will Japan declare another education crisis after a further decline in PISA score and rank? Will questions continue to be asked about China’s performance? And will Vietnam’s data issues be resolved? What is clear is that, despite the talk of shock, crises, and Finnish schooling nirvana, most countries have not out- or under-performed since they joined PISA. As the PISA results day frenzy dies down, it’s worth remembering that no one country has got it all right and rushing to emulate “top-performers” is unlikely to yield fast results.

