In 2007, the World Bank established the multi-donor Health Results Innovation Trust Fund (HRITF) to support and evaluate low-income country government efforts to pay providers based on their results in health care, with a focus on reproductive, maternal, newborn, child and adolescent health and nutrition. A decade later, the HRITF has had substantial impact on how governments and aid partners think and talk about health care financing, and the term “results-based financing” or RBF is now well-established in the policy vernacular.

Over this period, the HRITF also contributed to the development of the Global Financing Facility (GFF), a World Bank-based partnership that will be linked with ~$12 billion in financing from the International Development Association (IDA) and the International Bank for Reconstruction and Development (IBRD) to fund reproductive, maternal, newborn, child and adolescent health. While the GFF is not only about RBF, RBF remains an ingredient, and this is a good moment to ask whether and how the HRITF approach has worked to improve coverage and health among its recipients.

We at CGD will be taking the opportunity of the HRITF’s 10th birthday to examine the impact of the HRITF RBF programs on outcomes, and the significance of the results for the GFF and the rest of global health aid. We are helped in this effort by a unique and important feature of the HRITF: its emphasis on using impact evaluations to inform the design and operation of the programs it supports. In contrast to other large global health funders such as the Global Fund, the HRITF and its donors insisted on the deployment of rigorous impact evaluations as a means to learn and to adjust programs, and to build momentum (where there were positive results) for the broader adoption of sometimes small-scale programs. A recent World Bank report provides a first overview of key lessons and knowledge gaps from one preliminary and seven completed impact evaluations in a range of countries.

The HRITF impact evaluation portfolio

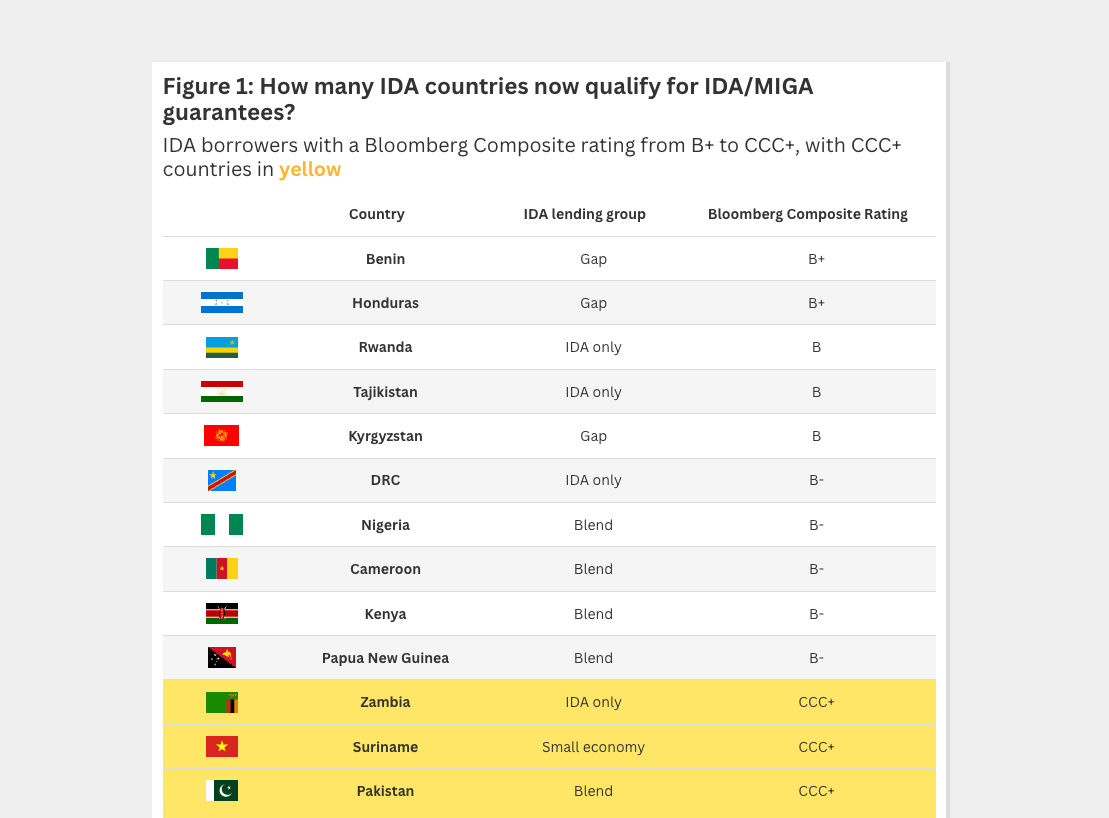

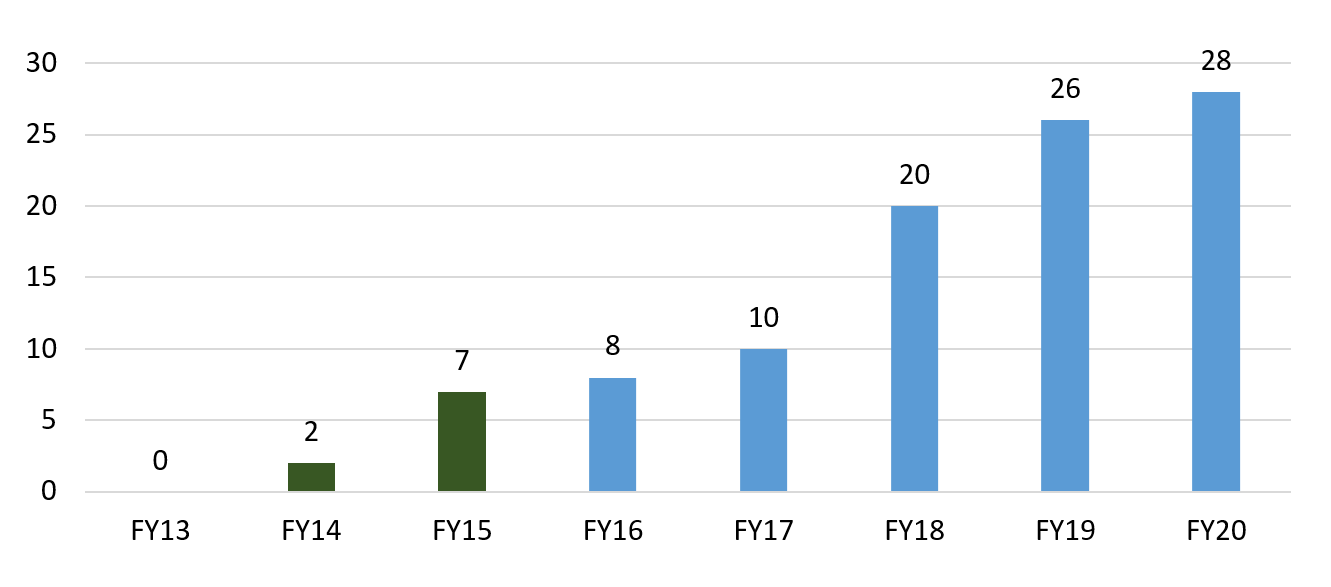

In total, the trust fund currently supports 35 programs and 33 impact evaluations, of which four are qualitative. Seven of the quantitative evaluations have been completed to date and 28 are expected to be ready by 2020. Eight additional countries are expected to disseminate results in 2018. Yes, a meta-analysis is planned.

The timeline for the evaluations reflects lags in approvals for evaluation studies and their designs, data collection and exposure. The HRITF’s impact evaluation timeline suggests that going from approved funding to approved design takes about one fiscal year. Going from approved design to baseline data takes about two fiscal years, in part because the baseline data collection often occurs just before the operational launch of the RBF programs. An exposure period of 18-24 months means that evaluations are completed about two years after the baseline.
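To make the arithmetic behind these lags explicit, here is a minimal sketch that adds up the approximate stage durations described above. The stage labels and the rounding of each lag to whole fiscal years are our own simplifications for illustration, not figures taken from the World Bank report, so the total should be read as a rough order of magnitude only.

```python
# Approximate lags from the paragraph above, rounded to whole fiscal years.
# Stage labels and rounding are illustrative assumptions, not report data.
STAGE_LAGS_FY = [
    ("approved funding -> approved design", 1),
    ("approved design -> baseline data", 2),
    ("baseline data -> completed evaluation", 2),  # 18-24 months of exposure
]


def cumulative_timeline(stages):
    """Return (stage, cumulative fiscal years since funding approval) pairs."""
    elapsed = 0
    timeline = []
    for name, lag in stages:
        elapsed += lag
        timeline.append((name, elapsed))
    return timeline


TIMELINE = cumulative_timeline(STAGE_LAGS_FY)
TOTAL_FY = TIMELINE[-1][1]

for name, elapsed in TIMELINE:
    print(f"{name}: about {elapsed} fiscal year(s) after funding approval")
print(f"Rough total: about {TOTAL_FY} fiscal years")
```

Under these assumptions, a typical evaluation would take roughly five fiscal years from approved funding to completion, which is consistent with the slow early accumulation of completed studies.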

Lots already, more to come...

Cumulative count of completed RBF evaluations supported by HRITF

Fiscal years are July 1—June 30. Source: World Bank summary report.


The RBF interventions evaluated thus far

The interventions and their evaluation studies differ in several ways. One reason is that RBF interventions are quite complex—they are more than just financial incentives for quantity and quality, but tend to involve increased supervision, routine measurement (made publicly available in some cases), and some provider autonomy in the spending of funds released when results are accomplished. Some key distinctions include:

Different intervention designs. The interventions differ in more or less subtle ways, and aim to influence both provider and patient behavior. For instance, the study on the DRC’s Haut-Katanga district incentivized only quantity measures on the supply side, while the Rwanda Community program offered incentives for both volunteer community health workers and patients (in separate and crossed study arms). Likewise, Zimbabwe accompanied payment incentives to providers with the abolition of user fees for those same services.

Multiple study arms. Many studies are designed to separate out the effects of various components of an RBF intervention. For instance, the Zambia evaluation has three study arms: RBF, unconditional financing, and business as usual. The Cameroon study has four arms to also assess the relative importance of supervision. This is incredibly useful as a means to understand the most cost-effective strategy to increase coverage and quality, and to establish attributable impact outcomes. (Cost-effectiveness studies are already available for Zambia and Zimbabwe.)

Various targeted entities and payout procedures. The targeted entities varied; they might be primary care centers, hospitals, communities, provinces, and/or patients. Programs also differed in how bonuses could be allocated and made salient to individual providers, e.g., how (and how much of) the bonus a health center could allocate to individual workers. In Afghanistan, the health workers’ bonus was rolled into the regular paycheck and facility managers were tasked with communicating the bonus amounts.

Operational challenges. All new programs are difficult to implement and operate, especially when they’re just starting up. Alas, impact evaluations often focus on those challenging early implementation periods (e.g., making use of a staggered scale-up). The RBF evaluations had to contend with anything from providers having difficulty understanding the incentives (Afghanistan) to irregular payments of bonuses (DRC).

HRITF countries, targeted facilities and evaluation designs

Impact, success factors, and open questions

The World Bank’s report notes that, on the whole, these RBF programs improved service coverage and quality—at least on some of the rewarded indicators. And some evaluations report that these improvements did not come at the cost of lower quality of non-incentivized measures (one of Argentina’s evaluations did see a decrease in utilization by non-beneficiaries, but there was no effect on birth outcomes). Effects on worker motivation and client satisfaction seem to be mixed.

The report also discusses several enabling and disabling factors. For example, greater financial autonomy for facilities can improve resource allocation as in Argentina and Zimbabwe. Similarly, financial incentives offered to health workers need to be meaningful enough to encourage behavioral changes.

And finally, the report also highlights several questions that have only partially been answered by the available research. For example, how do RBF programs compare to other interventions (e.g. enhanced/unconditional financing)? In Zambia enhanced financing also generated improvements in health outcomes, and may have been more cost-effective (in terms of cost per quality adjusted life year) than the RBF. Two other questions—What is the effect of RBF on equitable access to high-quality care? How does the context in which financial incentives are provided affect the efficacy of different RBF programs?—remain largely unanswered thus far by the impact evaluations.

What else?

Additional lessons from the HRITF’s RBF programs will come to light over the next few years as more evaluations are completed and disseminated. In the meantime, here are four items we’re curious about and that could be explored with the existing data:

- How to interpret the reported effects? RBF programs often have 10-30 rewarded indicators, and may also (positively or negatively) affect unrewarded measures and health outcomes. Gauging the full impact of RBF requires assessing all of these to at least some degree. The rewarded indicators have gotten most of the attention thus far, but a systematic assessment of the unrewarded measures and outcomes across all evaluations could help the global health community to interpret and use the results.

- How to make sense of the different study arms and counterfactuals? As noted above, most evaluations try to disentangle the effect of various components of RBF. A systematic assessment of what we can learn from those variations for program design and implementation is essential.

- Which providers respond more or less, and what could be done to help laggards and challenge leaders? Understanding effect heterogeneity across providers seems key to designing and evolving effective RBF programs and ancillary interventions.

- Relatedly, what is the effect of RBF on equitable access to high-quality care, e.g., across geographies or different population groups?

So there’s lots more to learn from the available experience. Making more of the evaluation datasets available and making them available more quickly could bring in additional resources and ideas (heads up, PhD students!), and speed up the relatively slow pace of publication so far. Currently the World Bank’s microdata library features datasets for six countries (try keywords like HRITF or “Health Results-Based Financing”) but there are follow-up data for only one evaluation, the Rwanda Community intervention.

Stay tuned

Ultimately, we’d also like to understand whether and how the HRITF approach works “better” than other global health financing strategies. So over the next months, we’ll be working on a policy note using the available documentation of the HRITF’s completed evaluations to ask what else we’ve learnt and what else we hope to learn. Watch this space for more and send us an email if there’s something you’re curious about.

PS: if you’re interested in a broader assessment of the objectives and operational arrangements of the HRITF, try the 2012 Norad-commissioned evaluation report.
