The 2014-2015 Ebola outbreak in West Africa was a disturbing demonstration of how poorly equipped international institutions were to assist the affected people or to learn how to better treat and prevent the disease. A new report from the National Academies of Sciences, Engineering, and Medicine (NASEM) makes the case that the next outbreak will go no better unless governments and non-governmental organizations can come together and agree on basic principles for “Integrating Clinical Research into Epidemic Response,” which is the title of the report.

Earlier this month, CGD hosted a presentation on the report by Gerald Keusch and David Peters, who chaired and served on the committee, respectively. Following the presentation, I (Mead) moderated a panel discussion with CGD’s Jeremy Konyndyk and Médecins Sans Frontières’ (MSF) Carrie Teicher, who discussed their experiences working on crisis response during the Ebola outbreak—and how we can do better.

Below, you can find our key takeaways from the event.

For certain diseases like Ebola, clinical trials can only be conducted during outbreaks

Few outbreaks require integrated research. As Carrie Teicher pointed out, outbreaks occur constantly: MSF is currently responding in various countries around the world to outbreaks of diseases such as cholera, measles, malaria, and tuberculosis. Ebola was different. Because of three key characteristics of the disease, methods for treating or preventing it were almost completely unknown: (a) animal models of the disease are particularly unreliable, (b) the absence of effective treatment makes it unethical to study the disease by deliberately infecting healthy human volunteers, and (c) there are no Ebola patients to study between outbreaks. Thus, the only time to develop and test improved therapeutics and vaccines for Ebola, or for any other disease that shares these characteristics, is during an outbreak.

Research must be launched with breakneck speed

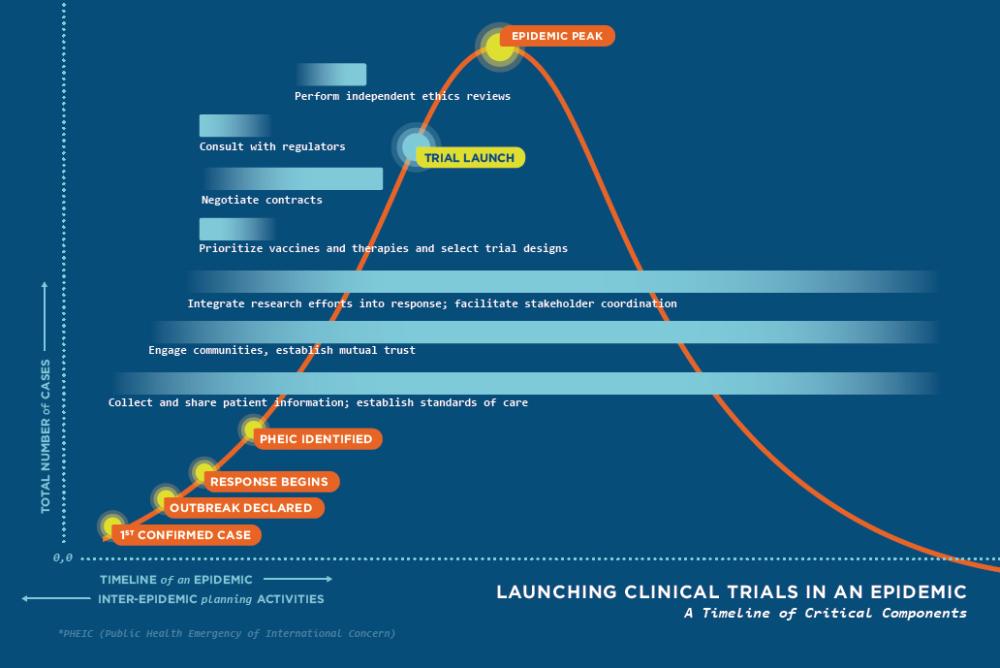

During an outbreak of a serious, untreatable, unpreventable infectious disease like Ebola, research on how to treat or prevent it must be designed, launched, and concluded at breakneck speed. First, more patients fall ill or die with each passing day, so the humanitarian need and political pressure for knowledge both grow exponentially. And second, most outbreaks mercifully are controlled within a few weeks or months, far less time than is usually required for clinical research. As illustrated in Figure 1, taken from the committee report, the clinical trials of new therapeutics or vaccines should ideally be launched before the epidemic peaks, so that there will be sufficient patients to be randomly allocated across treatment and control arms of several different studies.

During the Ebola outbreak, clinical trials were launched too late to yield strong results

As Jerry Keusch said during his presentation, “The rapidity with which [the clinical trials were set up] was remarkable, unprecedented, and not fast enough.”

Figure 2 shows when actual trials of several therapeutics were launched relative to the timing of the epidemic in each of the three countries. In contrast to the ideal shown in Figure 1, none of the trials started before an epidemic peak, and all but one started long after they had peaked. The vaccine trials (see Figure 4-1 in the report) launched even later, when only a few dozen vaccinated patients could possibly be exposed to the disease. With such a late start, resulting in such small samples of infected or exposed subjects, none of the treatment trials and only one of the vaccine trials had sufficient power to reject the hypothesis of zero benefit. (See chapter 3 of the report.)

Figure 2. Actual timing of therapeutic trials in three affected countries relative to reported cases
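
The link between a late start and weak results can be made concrete with a rough power calculation. The sketch below is illustrative only. It uses a standard normal approximation for comparing two proportions, and the mortality rates (50 percent in the control arm, 35 percent in the treatment arm), the sample sizes, and the function name `power_two_proportions` are assumptions chosen for illustration, not figures from the report or from any of the trials.

```python
from statistics import NormalDist


def power_two_proportions(p_control, p_treated, n_per_arm, alpha=0.05):
    """Approximate power of a two-sided z-test comparing two proportions.

    Uses the normal approximation with unpooled variances and equal arm sizes.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    variance = (p_control * (1 - p_control) + p_treated * (1 - p_treated)) / n_per_arm
    z_effect = abs(p_control - p_treated) / variance ** 0.5
    # Probability that the test statistic lands in either rejection tail.
    return std_normal.cdf(z_effect - z_crit) + std_normal.cdf(-z_effect - z_crit)


# Hypothetical mortality rates: 50% without the drug, 35% with it.
for n_per_arm in (20, 50, 200, 500):
    power = power_two_proportions(0.50, 0.35, n_per_arm)
    print(f"{n_per_arm:>4} patients per arm -> power {power:.2f}")
```

Under these assumed rates, a trial that enrolls only a few dozen patients per arm would usually fail to reject the hypothesis of zero benefit even if the drug worked, while a trial launched early enough to enroll several hundred per arm would very likely succeed.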

Little was learned from the delayed Ebola research trials

The presenters and their report argue that, despite prodigious effort, the global health system failed in several respects during the 2014-15 Ebola outbreak. It failed to properly identify the disease as Ebola until March of 2014 (wasting three critical months). It failed to declare the outbreak a Public Health Emergency of International Concern until August 2014 (slowing coordination for four more months). It then failed to launch research in a timely manner (as shown in Figure 2 above) and failed to protect the research from political pressure to release premature, weak findings (Report, Chapter 3). According to Keusch and the report, none of the five tested treatment drugs conclusively passed the tests required by drug regulatory agencies.

Regarding the vaccine trials, Keusch and the report note that WHO and Merck have publicized the 100 percent point-estimate of efficacy from the “treatment-on-treated” (TOT) analysis, but have not mentioned that the 95 percent confidence interval for that estimate is from 100 percent down to as low as 69 percent. Furthermore, according to Keusch and the report, the more conservative and rigorous “intent-to-treat” (ITT) analysis finds efficacy to be only 65 percent with a 95 percent confidence interval ranging from as low as 47 percent up to 91 percent. These wide confidence intervals for even the best performing new pharmaceutical product show how little has been learned by research during the Ebola outbreak despite the tens of millions of dollars expended. (See page 125 in the report. For a good introduction to the distinction between TOT and ITT analysis, see Gertler et al., Impact Evaluation in Practice, page 39ff.)
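
To see why the two analyses can diverge, and why a handful of cases produces such wide intervals, consider the minimal sketch below. Every count in it is hypothetical and is not the trial's data. The "TOT" figure here is a naive comparison of people who actually received the vaccine against the control arm, standing in for the more careful methods described by Gertler et al., and the interval uses the simple Katz log method for a risk ratio, which ignores the clustered design of the real trial. The function names `efficacy` and `efficacy_ci` are assumptions made for illustration.

```python
from math import exp, log, sqrt
from statistics import NormalDist


def efficacy(cases_vax, n_vax, cases_ctrl, n_ctrl):
    """Vaccine efficacy, defined as 1 minus the risk ratio."""
    return 1 - (cases_vax / n_vax) / (cases_ctrl / n_ctrl)


def efficacy_ci(cases_vax, n_vax, cases_ctrl, n_ctrl, level=0.95):
    """Katz log-method interval for efficacy; needs cases in both arms."""
    if cases_vax == 0 or cases_ctrl == 0:
        raise ValueError("log method needs at least one case in each arm")
    z = NormalDist().inv_cdf(0.5 + level / 2)
    log_rr = log((cases_vax / n_vax) / (cases_ctrl / n_ctrl))
    se = sqrt(1 / cases_vax - 1 / n_vax + 1 / cases_ctrl - 1 / n_ctrl)
    # A higher risk ratio means lower efficacy, so the bounds swap.
    return 1 - exp(log_rr + z * se), 1 - exp(log_rr - z * se)


# Hypothetical counts, NOT taken from the report or the trial.
ASSIGNED_TO_VACCINE = 2000   # everyone randomized to the vaccine arm
ACTUALLY_VACCINATED = 1500   # subset who really received the vaccine
CONTROL = 2000
CASES_AMONG_VACCINATED = 0
CASES_AMONG_ASSIGNED_BUT_UNVACCINATED = 6
CASES_IN_CONTROL = 16

# Naive "treated only" comparison: drop those who never got the vaccine.
tot = efficacy(CASES_AMONG_VACCINATED, ACTUALLY_VACCINATED, CASES_IN_CONTROL, CONTROL)

# Intent-to-treat: analyze everyone in the arm they were assigned to.
itt_cases = CASES_AMONG_VACCINATED + CASES_AMONG_ASSIGNED_BUT_UNVACCINATED
itt = efficacy(itt_cases, ASSIGNED_TO_VACCINE, CASES_IN_CONTROL, CONTROL)
itt_low, itt_high = efficacy_ci(itt_cases, ASSIGNED_TO_VACCINE, CASES_IN_CONTROL, CONTROL)

print(f"treated-only efficacy: {tot:.0%}")
print(f"ITT efficacy: {itt:.1%} (95% CI {itt_low:.0%} to {itt_high:.0%})")
```

With so few cases in either arm, the interval around the ITT estimate spans most of the range between no benefit and near-complete protection, which is the pattern the report criticizes.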

Needed institutional reforms

So that the timing of international and national public health initiatives in the next outbreak resembles Figure 1 more than Figure 2, the report and the presenters urge several reforms to institutions, governance, and procedures.

“You need to deliver processes that account for… legitimacy, inclusiveness, authority, and accountability” – David Peters

During the Ebola outbreak, the media covered how widespread fear and mistrust within communities hampered efforts to address the epidemic. Panelist Jeremy Konyndyk recounted the skepticism with which embassy officials perceived the constant influx of research teams. Such stories highlight a main failure of the outbreak response: international actors didn’t thoroughly engage key stakeholders from the start. The NASEM committee proposed an International Coalition of Stakeholders (ICS) and a Rapid Research Response Workgroup (R3W) to improve coordination during any future outbreaks. Both groups would establish “touch points” among vital stakeholders, the former during the inter-epidemic period and the latter during an epidemic. The ICS would gather pharmaceutical companies, civil society organizations, governments, the World Health Organization, foundations, academics, etc. to refine a research agenda and identify capacity gaps in low- and middle-income countries. The R3W would encourage interoperability among legal institutions, ethics review boards, community representatives, and pathogen experts. These two mechanisms would aim to enable earlier engagement and trust-building with communities, something the NASEM report highlighted as particularly important (and listed as one of the first steps in Figure 1).

“We need to ensure that the [produced] technology, be it vaccines, therapeutics, or diagnostics, are affordable, appropriate, and available” – Carrie Teicher

Speaking from the MSF perspective, Carrie Teicher also noted that any new technologies developed through clinical research should be usable by response organizations. MSF could not deploy an Ebola vaccine in the Democratic Republic of the Congo (where the latest Ebola outbreak took place) because the vaccine was not heat-stable. Previous Ebola outbreaks have taken place in rural areas where access to refrigeration is extremely limited, so the need for an “appropriate” vaccine remains a priority.

“[Low quality] patient data was a huge problem for us in the operational response… if you don’t have a good picture about what [the disease] is doing now it’s hard to project what it is going to do” – Jeremy Konyndyk

Based on his experience as the former director of USAID’s Office of U.S. Foreign Disaster Assistance, Konyndyk stressed the need for targeted but careful messaging around the clinical trials and for better data collection to inform them. For example, explaining to local community members how a randomized controlled trial works, and that some individuals will receive the treatment while others will not, must be done very carefully. Konyndyk also cited the difficulty of projecting future disease spread when current patient data is messy. During the outbreak, the same person could be counted as a new case several times: when they were first identified as having the disease, when they were admitted to a clinic, when they were transferred to another clinic, and when they passed away. He noted that biometrics, which have been applied successfully in other humanitarian efforts, might be an avenue worth exploring.
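
The counting problem Konyndyk described can be sketched in a few lines. The example below assumes that each record carries a stable patient identifier, which is exactly what was often missing during the outbreak and what biometrics might help supply. The record layout, the identifiers, the dates, and the function name `first_report_by_patient` are all hypothetical.

```python
from datetime import date

# Hypothetical event log: (patient_id, event, date reported).
events = [
    ("P001", "identified", date(2014, 9, 1)),
    ("P001", "admitted", date(2014, 9, 2)),
    ("P001", "transferred", date(2014, 9, 5)),
    ("P002", "identified", date(2014, 9, 3)),
    ("P001", "died", date(2014, 9, 9)),
    ("P002", "admitted", date(2014, 9, 4)),
]


def first_report_by_patient(event_log):
    """Map each patient to the earliest date they appear in the log."""
    first_seen = {}
    for patient_id, _event, reported_on in event_log:
        if patient_id not in first_seen or reported_on < first_seen[patient_id]:
            first_seen[patient_id] = reported_on
    return first_seen


naive_count = len(events)                       # every event counted as a case
unique_cases = first_report_by_patient(events)  # one entry per patient

print(f"naive case count: {naive_count}")          # prints 6
print(f"deduplicated cases: {len(unique_cases)}")  # prints 2
```

Here two patients generate six reported "cases." Without a reliable identifier to link the events, the inflated count feeds directly into any projection of future spread.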

Conclusion

Prior to the 2014 Ebola outbreak, the global health community did not have clear answers to questions around the ethics, implementation, and evaluation of clinical trials during epidemics. The committee put together by the National Academies of Sciences, Engineering, and Medicine took the first of many steps needed to provide clear answers. The committee determined that randomized-controlled trials are both ethical and functional during outbreaks, but that communities must first be prepared through meaningful engagement. The committee also stressed that any research must be scientifically rigorous and driven by the needs of a diverse set of stakeholders, including local communities and response organizations, requirements Konyndyk and Teicher echoed in their remarks.

Serious conversations about epidemic-time clinical trials cannot be delayed until the next outbreak. In the case of Zika in the Americas, the dramatic decline in cases forced a Zika vaccine trial to move to more heavily affected areas, but “with so little viral spread, the trial ‘probably has little hope of success.’” The same problem that plagued the clinical trials of the Ebola outbreak—poor timing—persists even two years after the outbreak in West Africa and after many international organizations promised to do better.

It’s time to take epidemic-time clinical research seriously and develop a sound and inclusive strategy around it.
