Relying on biased information undermines the effectiveness of evidence-based policymaking. A potential source of bias in many datasets is that most of the world’s data scientists (i.e., the people who collect, organize, and analyze data and make decisions based on it) are men. Women hold just 18 percent of data science jobs in the United States, and the problem is worse in most lower-income countries, where women are less likely to have access to the science, technology, engineering, and mathematics (STEM) education that provides a gateway to a career in data science. In addition to increasing the risk of bias, gender imbalances in STEM and data science training make it harder for women to succeed in high-paying professions linked to the digital economy, further widening gender pay gaps.

Today marks both International Women’s Day and the 24-hour virtual Women in Data Science conference, which aims to inspire and educate data scientists worldwide. Now is the time for policymakers, civil society, academia, and other actors to accelerate efforts to get women into data science, further driving progress towards gender equality.

Understanding the risks of biased data

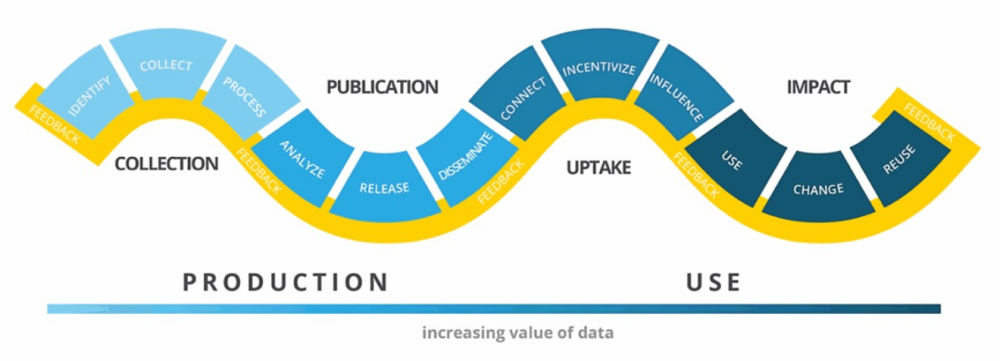

The choices that data scientists make about how to measure, collect, organize, and analyze the data they use influence the insights they derive and open the door to bias at every stage of the data value chain (figure 1). Consciously or not, data scientists embed their values, interests, and life experiences into the data they handle, shaping outcomes in line with their understanding of the world. In this sense, datasets and algorithms can be thought of as “encoded sets of values.”

Figure 1. The Data Value Chain

Source: Open Data Watch and Data2X

The underrepresentation of women in data science increases the risk that data-driven policies will be designed and implemented in ways that harm women’s interests. Consider the following examples from Caroline Criado Perez’s Invisible Women: Exposing Data Bias in a World Designed for Men, which reveal how biased data can harm women and girls:

- Crash test dummies. Historically, the young, male body was used as the standard for designing test dummies for military technologies because women were excluded from major combat roles. This bias was not accounted for when similar test dummies were developed for vehicle safety tests for the general population. This may be one reason why women are more likely than men to sustain serious injuries in car accidents.

- Hiring algorithms. In an early attempt by Amazon to design a computer program to guide its hiring decisions, the company used resumes submitted over the previous decade as training data. Because most of these resumes came from men, the program taught itself that male candidates were preferable to female candidates. While Amazon recognized this tendency early on and never used the program to evaluate candidates, the example highlights how relying on biased data can reinforce inequality.

- Safe public restrooms. Women take, on average, 2.3 times longer than men to use the toilet, use the toilet more frequently than men, and often have children with them when using the restroom, yet one in three women globally struggles to find a safe place to use the toilet. This exposes women (and their children) to health and safety risks, including deadly diseases such as diarrhea, contracted through open defecation in bushes and bodies of water, and sexual assault in unsafe restrooms. Relying on gender-balanced data to determine the design and location of public toilets for women would help to address some of these problems.

Once datasets become biased, they are difficult to fix. Instances of bias are also more likely to arise when a homogeneous group of people is in charge of each stage of the data value chain. One way to reduce bias from the outset is to bring a diversity of experiences and perspectives into the teams that work with data.

Around the world, too few women enter pathways that lead to data science

Increasing the number of women in data science means first addressing a bottleneck in the pathways that lead to it. Men greatly outnumber women in data science because the field draws on expertise gained through studying STEM disciplines, including computer science, mathematics, and advanced digital skills, where women’s participation is low.

According to UNESCO, globally only 30 percent of female students focus on STEM-related subjects in higher education. Furthermore, women account for only 28 percent of enrollment in information and communication technology (ICT) degree programs, compared to 72 percent for men. These global averages mask significant regional and country differences, with the situation most acute in African countries.

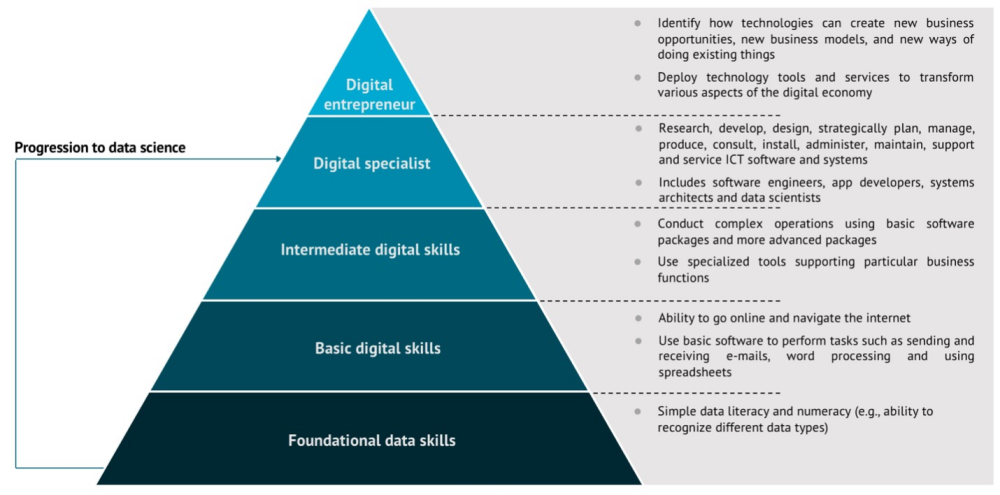

In most countries, a gender gap exists across all levels of the digital skills continuum (figure 2), starting with the basic literacy, numeracy, and digital skills needed to get online and use digital tools. Indeed, on average across all low- and lower-middle-income countries, only 61 percent of women are literate compared to 76 percent of men. As a result, women often struggle to engage with digital technologies effectively. This gap reflects multiple factors, including cultural biases that discourage women and girls from using technology, lower incomes that prevent women from purchasing digital hardware and software, and more limited access to education.

Figure 2. Continuum of digital expertise

Note: Information used to create the pyramid was curated from the following publications: Prado and Marzal, 2013; Empirica, INSEAD, and IDC Europe, 2013; UNCTAD, 2017; Pathways for Prosperity Commission, 2019.

To address the shortage of women data scientists, policymakers should first seek to ensure that women and girls have the foundational skills needed to engage with digital technology, namely basic literacy and numeracy. A policy approach that seeks only to strengthen skills associated with the top layers of the pyramid is unlikely to diversify participation in the digital economy. In fact, it may further entrench existing inequalities, once again leaving women and girls behind.

Building data and digital expertise also has the benefit of providing women with the skills needed to get jobs in a changing workforce. Currently, women-dominated occupations such as clerical work are being displaced at a high rate by algorithms and machines. This is particularly problematic in low- and lower-middle-income countries, where more jobs are susceptible to automation. For example, 42 percent of jobs in Ghana are prone to automation, compared to 6 percent in South Korea. Equipping women with data and digital skills will help them compete for the best-paying roles a digital economy has to offer, including data analysts and scientists, AI and machine learning specialists, and software developers.

Fortunately, several initiatives are putting data into the hands of women and girls and equipping them with the skills needed to move up the skills pyramid and participate in the digital economy. They include:

- Stanford University’s Women in Data Science (WiDS) initiative has reached over 100,000 women and girls worldwide who work in or are interested in data science. WiDS hosts datathons where participants hone their data skills, produces a podcast series that features data science leaders, and actively encourages secondary school students to consider careers in data science, AI, and related fields.

- The Millennium Challenge Corporation’s Data Collaboratives for Local Impact (DCLI) initiative, in collaboration with the Tanzania Data Lab, Vodacom, and UNICEF, has increased the participation of young women and girls in data literacy programs in Tanzania by creating coding and data visualization camps.

- In Côte d’Ivoire, DCLI funded Des Chiffres et des Jeunes (a project dedicated to training young people in data science), established a data fellowship, and showed how women empowered through data skills can help to narrow the gender data divide.

- The Tanzania Data Lab has partnered with the University of Dar es Salaam to launch East Africa’s first master’s program in data science. The first three cohorts of Tanzanian data scientists have included female experts in blockchain and AI.

- In Bangladesh, Huawei and Robi Axiata support a government initiative to train women and girls in rural areas in basic digital skills by providing them with access to laptops, WiFi, and specialized software. This initiative has already reached 63,000 women and girls and aims to reach an additional 166,000 by 2022.

- Technology Enabled Girl Ambassadors, a mobile-based research app co-created with girls, trains adolescent girls in Nigeria, Malawi, Tanzania, Rwanda, India, and Bangladesh to conduct interview research and data collection with people in their communities.

Women need a seat at the table

Discussions regarding gender and the data value chain have focused on the need to collect more high-quality gender- and sex-disaggregated data. This work program, driven by organizations like Data2X, UN Women’s Making Every Woman and Girl Count, and the Inclusive Data Charter, seeks to make women and girls more visible in datasets used in evidence-based policymaking.

While these efforts are crucial, more attention needs to be paid to how gender bias can enter at later stages of the data value chain, including analysis and use. A fundamental goal should be that “people who are most affected by governance decisions are in the room, on the team, and are part of the decision-making process.” To ensure that women are well represented on the teams that analyze and make use of data, we need to create opportunities for girls to start and remain on the STEM pathway and encourage them to build the data skills and digital expertise needed to participate firsthand in the data value chain.

We are grateful for comments provided by Agnieszka Rawa (Millennium Challenge Corporation) and Megan O’Donnell (Center for Global Development).
