Does raising test scores in developing countries lead to better health, wealth, and happiness later in life?

Existing evidence provides reason to be bullish on the long-term payoff to literacy and numeracy investments, but causal claims are often tenuous. For instance, we have a solid base of longitudinal studies linking primary school test scores to higher earnings in adulthood, but some obvious confounding factors remain: kids who go to better schools likely enjoy myriad other advantages along the way. Looking at the broader social benefits of girls’ schooling, and focusing on studies with a stronger claim to causality, a 2019 systematic review by the Population Council “found no evidence—not a single study—of the effects of literacy or numeracy on sexual and reproductive health” in low- and middle-income countries.

Nobody would deny that all kids deserve to learn to read and do basic math, at a bare minimum. But in a low-income context facing stark budgetary trade-offs, how much should governments and donors be willing to spend to improve test scores by, say, 0.2 standard deviations? $100 per pupil? $200? More? Or would that money be better spent on cash transfers, vaccines, and a host of other priorities?

To answer those questions, in 2023 we launched CGD’s Return to Learning Initiative to invest in long-term follow-ups to studies that can pin down the causal links. Our criteria were:

- Randomized trials from a developing country setting…

- …of programs that focused narrowly on learning outcomes in primary school, rather than multifaceted programs that invested in nutrition or health,

- …which successfully raised test scores,

- …with participants who are now old enough to be in the labor market and starting families,

- …and could be successfully tracked today.

After compiling a long list of candidate studies, in 2024 we set out to test the feasibility of that last bullet point – collaborating with research teams in Mali, Liberia, Ghana, and Uganda to track participants from literacy interventions 12-15 years ago.

This post lays out what we’ve learned so far, and what we’re hoping to do next.

The proliferation of education RCTs in developing countries in the 2000s has created a new opportunity to study long-term impacts

By the mid-2010s, more than a dozen randomised controlled trials (RCTs) had tested interventions aimed at improving literacy and numeracy in low- and middle-income countries (LMICs). Many of the students who participated in these trials are now adults in the workforce. If they can be successfully located today (a big if), they could help answer questions with obvious policy importance, such as: if you learn more in second grade, do you earn more later?

From nearly 40 evaluations, we identified four pilot studies (Table 1). In addition to the criteria above, we looked for studies with big enough sample sizes to help us detect long-term effects, and where the original principal investigators (PIs) and institutional review boards (IRBs) were able to provide access to data and tracking information.

We tested two study designs: longitudinal studies that tracked the same individuals over time, and cross-sectional studies that tested different cohorts at each stage. Since around 40 percent of the evaluations in our database are cross-sectional, devising methods to track outcomes in each design was an important goal.

Table 1. Pilot studies for long-term tracking

| # | Citation | Country | Program dates | Schools | Learning effect | Age, 2024 | Kids tracked? |
|---|---|---|---|---|---|---|---|
| 1 | Lucas, McEwan, Ngware, & Oketch, 2014 | Uganda | 2009-11 | 109 | 0.18 sd | 21.0 | Yes |
| 2 | Piper & Korda, 2011 | Liberia | 2008-10 | 116 | 0.80 sd | 24.5 | No |
| 3 | Duflo, Kiessel, & Lucas, 2024 | Ghana | 2010-13 | 300 | 0.16 sd | 21.5 | Yes |
| 4 | Spratt, King, & Bulat, 2013 | Mali | 2009-12 | 75 | 0.25 sd | 23.0 | No |

Our baseline estimate is that a one standard deviation improvement in test scores should raise earnings by roughly 20-30 percent.

We are doing this work because there is little evidence causally linking early skill improvements to adult outcomes in LMICs. But we are not starting from scratch. Studies in high-income countries have already demonstrated this connection, and related research in LMICs provides additional insights.

We reviewed 17 high-quality studies that link cognitive skills to earnings. This research is summarised in Table 2 and shows that literacy is linked to earnings, but estimates vary widely. Interpreting the range of estimates across locations and study designs is not straightforward.

- The most comparable interventions (those that improved education quality) suggest that a one standard deviation (SD) increase in skills raises earnings by around 15 percent—but these come from only the US and Norway.

- Interventions in the most relevant contexts suggest larger effects, with studies in Ghana and Colombia estimating that each standard deviation increase in skills translates to earnings gains of 40 to 80 percent.

- Observational studies from LMICs suggest an average return of about 25 percent, slightly higher than estimates for high-income countries.

- The strongest causal evidence (experiments that track individuals from early childhood into adulthood) suggests returns of at least 20 percent, often exceeding 40 percent, though these programs typically involved more than just literacy gains.

Despite variation in findings, the evidence strongly suggests that literacy plays a valuable role in shaping future earnings. A reasonable expectation for LMICs might be a return of 25–30 percent per SD increase in literacy skills.
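To get a feel for what this expectation would mean for the two pilots where tracking worked, the sketch below multiplies the learning effects reported in Table 1 for Uganda and Ghana by the 25–30 percent range. It assumes that returns scale linearly with the size of the test-score gain. That assumption is introduced here purely for illustration and is not a finding of the studies. The function name is hypothetical.

```python
# Learning effects (in SD) from Table 1 for the two studies where tracking worked.
LEARNING_EFFECTS_SD = {"Uganda": 0.18, "Ghana": 0.16}

# Assumed return per 1 SD of skills, in percent (the 25-30 percent range above).
RETURN_LOW_PCT, RETURN_HIGH_PCT = 25.0, 30.0


def implied_earnings_gain(effect_sd, return_pct_per_sd):
    """Earnings gain in percent, ASSUMING returns scale linearly with effect size."""
    return effect_sd * return_pct_per_sd


# Low and high implied gains per country, rounded to one decimal place.
implied = {
    country: (
        round(implied_earnings_gain(effect, RETURN_LOW_PCT), 1),
        round(implied_earnings_gain(effect, RETURN_HIGH_PCT), 1),
    )
    for country, effect in LEARNING_EFFECTS_SD.items()
}

for country, (low, high) in implied.items():
    print(f"{country}: {low} to {high} percent")
```

Under these assumptions the implied earnings gains are roughly 4 to 5.4 percent, which gives a sense of how small the long-run effects to be detected may be.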

Table 2. The returns to cognitive skills in existing studies

| Type | Study | Location | Intervention | Earnings gain per SD skill increment |
|---|---|---|---|---|
| A | Danon et al. 2024 | Pakistan | None | 24 % |
| A | Evans & Yuan 2017 | Multiple LMIC | None | 36.5 % |
| A | Glewwe et al. 2022 | China | None | 12.9 % |
| A | Hampf et al. 2017 | Multiple OECD | None | 26 % |
| A | Heckman et al. 2015 | Multiple OECD | None | 18 % |
| A | Perez-Alvarez 2017 | Multiple LMIC | None | 28.5 % |
| A | Perez-Alvarez et al. 2023 | Multiple LMIC | None | 16-30 % |
| B | Campbell et al. 2012 | US | Abecedarian | 46 % |
| B | Gertler et al. 2021 * | Jamaica | Stimulation & nutrition | > 90 % |
| B | Heckman et al. 2010 | US | Perry Preschool | 21 % |
| B | Thompson 2017 * | US | Head Start | 42 % |
| C | Bettinger et al. 2019 * | Colombia | Private school lottery | 40 % |
| C | Duflo et al. 2023 | Ghana | Secondary scholarships | 81 % |
| D | Chetty et al. 2011 | US | Higher teacher VA | 12 % |
| D | Chetty et al. 2014 | US | Higher quality class | 9 % |
| D | Jackson et al. 2018 * | US | Higher spending | 14 % |
| D | Kirkebøen 2022 | Norway | Higher teacher VA | 20 % |

* These four studies combine results on learning and results on earnings from different papers; the earnings paper is referenced here and the earlier paper in the linked sheet.
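The group summaries in the bullets above can be roughly reproduced from Table 2 with simple averages, as in the sketch below. Several things here are assumptions rather than part of the original analysis: the reading of the type codes (A as observational, C as interventions in LMIC contexts, D as education-quality interventions) is inferred from the bullets, the 16-30 range is entered at its midpoint, "> 90" is entered as 90, and the LMIC/HIC labels and the function name are introduced for illustration.

```python
from statistics import mean

# (type, study, setting, earnings gain in percent per SD), transcribed from Table 2.
# Assumptions: the 16-30 range is entered as its midpoint (23), "> 90" as 90,
# and each study is labelled LMIC or HIC by its location.
TABLE_2 = [
    ("A", "Danon et al. 2024", "LMIC", 24.0),
    ("A", "Evans & Yuan 2017", "LMIC", 36.5),
    ("A", "Glewwe et al. 2022", "LMIC", 12.9),
    ("A", "Hampf et al. 2017", "HIC", 26.0),
    ("A", "Heckman et al. 2015", "HIC", 18.0),
    ("A", "Perez-Alvarez 2017", "LMIC", 28.5),
    ("A", "Perez-Alvarez et al. 2023", "LMIC", 23.0),
    ("B", "Campbell et al. 2012", "HIC", 46.0),
    ("B", "Gertler et al. 2021", "LMIC", 90.0),
    ("B", "Heckman et al. 2010", "HIC", 21.0),
    ("B", "Thompson 2017", "HIC", 42.0),
    ("C", "Bettinger et al. 2019", "LMIC", 40.0),
    ("C", "Duflo et al. 2023", "LMIC", 81.0),
    ("D", "Chetty et al. 2011", "HIC", 12.0),
    ("D", "Chetty et al. 2014", "HIC", 9.0),
    ("D", "Jackson et al. 2018", "HIC", 14.0),
    ("D", "Kirkebøen 2022", "HIC", 20.0),
]


def mean_gain(type_code, setting=None):
    """Unweighted mean earnings gain for one study type, optionally by setting."""
    gains = [
        gain
        for row_type, _study, row_setting, gain in TABLE_2
        if row_type == type_code and (setting is None or row_setting == setting)
    ]
    return mean(gains)


print(f"Observational, LMIC: {mean_gain('A', 'LMIC'):.1f} percent")
print(f"Observational, high-income: {mean_gain('A', 'HIC'):.1f} percent")
print(f"Education quality (type D): {mean_gain('D'):.2f} percent")
```

These are unweighted means. They ignore differences in sample size and precision across studies, so they are a rough check on the bullets rather than a formal synthesis.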

Low attrition rates on longitudinal studies are achievable, with some caveats

Fieldwork involved overcoming a range of logistical and ethical challenges, including recovering original personal data and obtaining approvals for re-contacting participants. Collaboration with original PIs—Moses Oketch and Adrienne Lucas—and institutions was crucial in navigating these steps.

To establish high-quality tracking protocols, we reviewed 44 follow-up studies, consulted with experts, and examined several protocols used in longitudinal surveys. These protocols were tested in 10 pilot sites in Uganda and 12 in Ghana, where we attempted to locate and interview all individuals from the original study.

The results exceeded our expectations, with 86 percent of participants tracked in Ghana and 95 percent in Uganda (Table 3).

Table 3. Results from individual tracking in Ghana and Uganda

| Country | Pilot sites (A) | Interview targets (B) | Number tracked (C) | Of which: deceased (D) | Number not tracked (B)-(C) | Tracking rate (C)/(B) |
|---|---|---|---|---|---|---|
| Ghana | 12 | 284 | 245 | 8 | 39 | 86% |
| Uganda | 10 | 636 | 606 | 8 | 30 | 95% |
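The derived columns in Table 3 follow directly from the interview targets and the number tracked. The short sketch below recomputes them. The combined rate across both countries is an extra figure computed here for illustration and does not appear in the table; the function and variable names are hypothetical.

```python
# Interview targets (B) and number tracked (C) from Table 3.
PILOTS = {
    "Ghana": {"targets": 284, "tracked": 245},
    "Uganda": {"targets": 636, "tracked": 606},
}


def tracking_summary(targets, tracked):
    """Return (number not tracked, tracking rate in percent)."""
    not_tracked = targets - tracked          # (B) - (C)
    rate_pct = 100.0 * tracked / targets     # (C) / (B)
    return not_tracked, rate_pct


summary = {country: tracking_summary(**counts) for country, counts in PILOTS.items()}

# Combined rate across both pilots (not reported in Table 3).
total_targets = sum(c["targets"] for c in PILOTS.values())
total_tracked = sum(c["tracked"] for c in PILOTS.values())
_, combined_rate_pct = tracking_summary(total_targets, total_tracked)

for country, (not_tracked, rate_pct) in summary.items():
    print(f"{country}: {not_tracked} not tracked, {rate_pct:.0f}% tracked")
print(f"Combined: {combined_rate_pct:.1f}% tracked")
```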

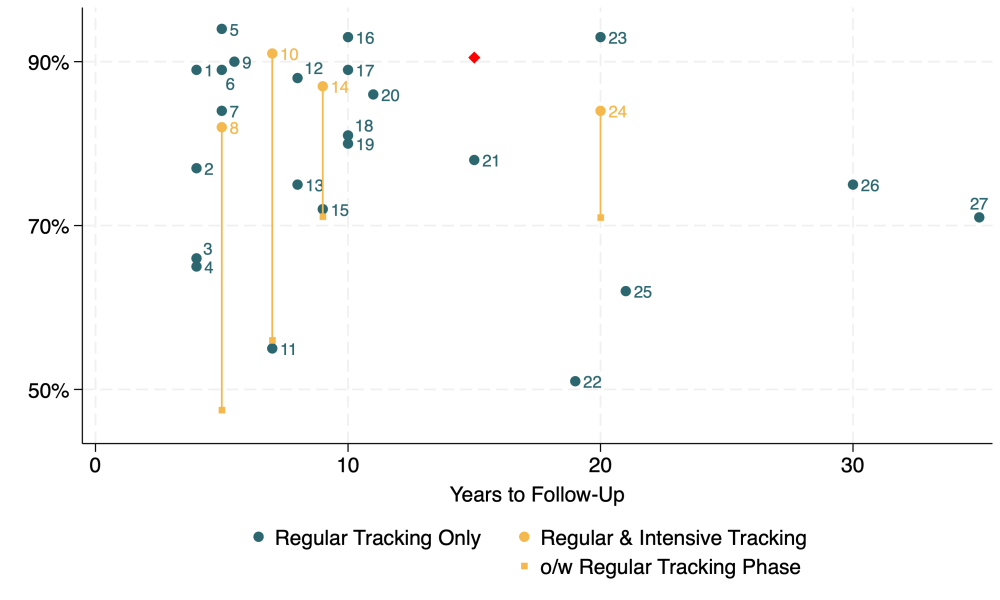

For comparison, the mean tracking rate across 27 attempts to follow up participants from experimental studies in low- and middle-income countries was 80 percent (Figure 1). Of the seven studies with an equal or longer follow-up period, only one achieved a higher rate: 93 percent after 20 years in Mexico (Araujo & Macours, 2021).

Figure 1. Tracking rates in long-run follow-ups of experiments in low- and middle-income countries (plus our simple mean rate in red)

That said, we also identified clear limits to when and where longitudinal tracking seems feasible.

Many RCTs in our database were cross-sectional, meaning participant names were never recorded. We tested whether we could reconstruct relevant cohorts from school rosters, but missing records and inaccessible schools made this infeasible.

In Liberia, only 16 of the 40 rosters were found (either at schools or from staff who had worked there), leading to high sample attrition. In Mali, security issues and school closures made follow-up impossible in at least one-third of schools. These results suggest that future efforts should prioritise longitudinal studies where individual data is available.

Few education RCTs are powered to study long-term impacts, suggesting the need to pool multiple studies

Finding individuals long after an intervention is encouraging, but it does not automatically mean we can measure the effects we care about. The original studies were never intended to provide evidence on long-term outcomes in isolation. But we’re increasingly confident that the solution lies in combining data from multiple studies.

While we lost two studies (Liberia and Mali) from our pilot, this does not fundamentally weaken our approach. In fact, we can replace them with a stronger study from India, specifically a follow-up of a 2013–14 Pratham learning camp in Uttar Pradesh. This intervention showed large short-term effects (0.7 standard deviations), similar to those measured in Liberia. With a sample covering 245 schools, a follow-up study would be a valuable addition to the initiative.

With a small number of studies, estimating a pooled effect is inherently difficult. We’ve been carefully working through the best way to combine data from three studies, running numerous simulations under different model assumptions and outcome definitions. Rather than focusing on the specific details of each intervention, our core interest lies in understanding the link between early literacy and later-life outcomes.

Pooling across three studies, our estimates suggest that if literacy effects are consistent across contexts, we are powered to detect a 0.15–0.20 standard deviation (SD) increase in earnings for a one SD improvement in literacy. However, if there is moderate variation in effects across studies, the detectable impact increases to 0.30–0.35 SD.
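To illustrate why variation in effects across studies raises the detectable impact, here is a minimal sketch of a minimum detectable effect (MDE) calculation for a pooled estimate. It is not the simulation approach described above. It uses a textbook inverse-variance formula, treats the between-study standard deviation `tau` as known, and the study-level standard errors of 0.10 are placeholder values, not figures from the pilot studies.

```python
from math import sqrt
from statistics import NormalDist


def pooled_mde(study_ses, tau=0.0, alpha=0.05, power=0.80):
    """MDE for an inverse-variance pooled effect across studies.

    study_ses: standard error of each study's own estimate (hypothetical inputs).
    tau: assumed between-study SD of true effects (0 means identical effects).
    """
    z = NormalDist()
    # Standard two-sided multiplier: about 2.8 for alpha=0.05, power=0.80.
    multiplier = z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)
    # Each study's weight shrinks as heterogeneity (tau) grows.
    weights = [1.0 / (se**2 + tau**2) for se in study_ses]
    pooled_se = sqrt(1.0 / sum(weights))
    return multiplier * pooled_se


# Placeholder standard errors for three studies (illustrative only).
ses = [0.10, 0.10, 0.10]
mde_common = pooled_mde(ses, tau=0.0)
mde_heterogeneous = pooled_mde(ses, tau=0.10)

print(f"MDE with consistent effects: {mde_common:.2f}")
print(f"MDE with moderate heterogeneity: {mde_heterogeneous:.2f}")
```

With only three studies, any between-study variance is spread over very few units, so even moderate heterogeneity inflates the pooled standard error noticeably. This is the same qualitative pattern as in the estimates above, though the numbers printed here depend entirely on the placeholder inputs.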

These are sizable impacts, but they align with observed relationships between test scores and later-life outcomes, including earnings effects in datasets like the Kenya Life Panel Survey (0.24 SD) and in the studies reviewed earlier in this post. Similar relationships appear in test-score-to-schooling associations in longitudinal data from Ethiopia, India, Pakistan, Peru, Vietnam, and Kenya (ranging from 0.31 to 0.53 SD).

There is still room for improvement, particularly in how we anticipate and model effect size differences between pooled studies. Over the next year, we will explore how Bayesian methods can improve our synthesis of findings across a small number of studies.

Next steps: Expanding tracking, improving methods, and informing policy

Full-scale tracking will soon begin in Ghana and Uganda, taking advantage of the research infrastructure built during the successful pilot phase. But we’re also looking for more studies to track. We’re currently working with Pratham and the original PIs to explore a follow-up to Esther Duflo, Rukmini Banerji, Abhijit Banerjee, Mark Berry, Harini Kannan, Shobhini Mukerji, Marc Shotland, and Michael Walton’s evaluation of Pratham’s 2013–14 learning camps in Uttar Pradesh.

Several related efforts are also underway, each exploring long-term outcomes of early learning interventions—though they differ in terms of intervention type, context, and the age of participants now being followed. These include:

- Jishnu Das and colleagues, who are continuing to track the 2003 Learning and Education Achievement in Punjab Schools (LEAPS) cohort—now in their 20s and 30s—to investigate the long-run links between schooling, early skills (some shaped by an RCT), and a broad range of adult outcomes;

- Alex Eble and colleagues, who are following up, seven years on, with participants in highly effective early learning programmes in The Gambia and Guinea-Bissau;

- Jason Kerwin, Rebecca Thornton, and colleagues, who are tracking individuals who benefited from the highly effective Northern Uganda Literacy Project, first launched in 2009 and scaled in 2014;

- Jacobus Cilliers and colleagues, who are tracking children who took part in South Africa’s Early Grade Reading Study, and who have already reported results on the persistence and emergence of literacy skills some four years post-intervention.

Many of these teams are already collaborating to develop common tracking approaches and measurement instruments so that when results come in, it’ll be possible to make meaningful cross-context comparisons.

Combining all of these efforts, we’re hopeful that within two to three years, policymakers will have a much better idea about the long-run payoff to improving basic literacy and numeracy skills.

For now, stay tuned, and please watch this space for more updates on Return to Learning.
