Earlier today the West African Secondary School Certificate Examinations (WASSCE) were suspended across Anglophone West Africa due to the threat of COVID-19. Students have been required to vacate schools, uncertain when they will return to earn their leaving credentials. The cancellation of the exams and the broader health and societal effects of COVID-19 are unwelcome and worrying. But the cancellation does provide an opportunity to take a closer look at the exams and make sure that—when students do return—they will face a fair test.

The WASSCE stakes are always high. These exams certify student achievement; they enable objective and transparent placement of students into jobs, universities, and scholarships; and they offer a summary measure of the effectiveness of education in Ghana, Liberia, Nigeria, Sierra Leone, and The Gambia.

All of these uses rest on an important assumption—that the WASSCE is a reliable measure of achievement, with results that are comparable over time. But historical trends suggest otherwise, with potentially major implications for countries’ policy choices and for fairness for hundreds of thousands of students.

There’s no explanation for large variations in achievement from year to year

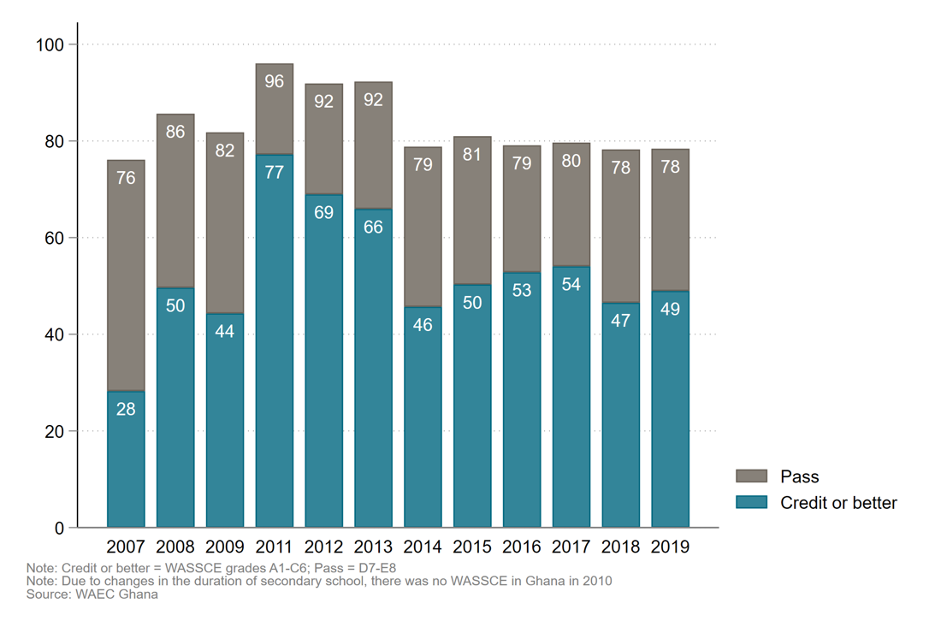

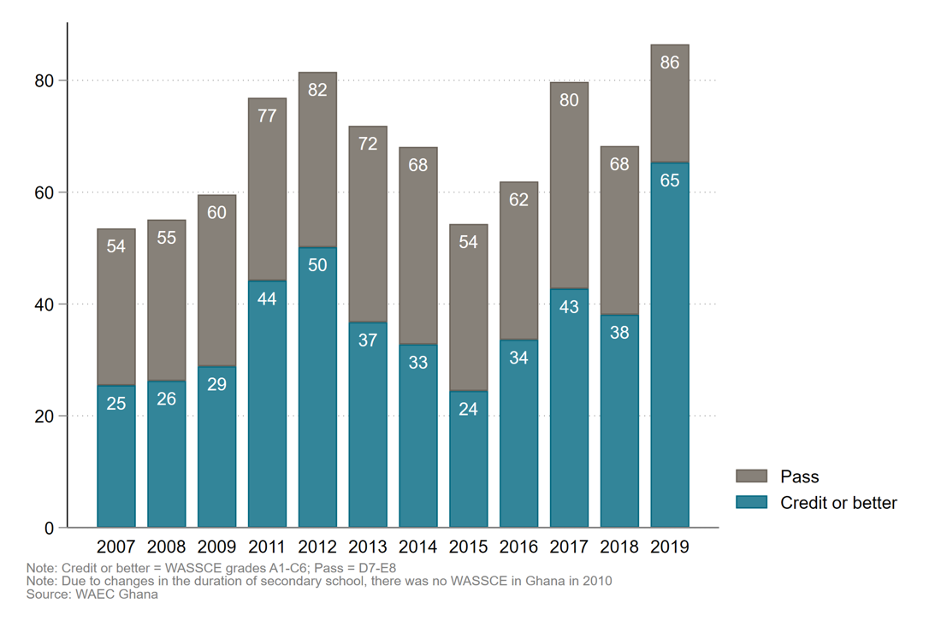

In principle, the WASSCE pass mark is fixed over time: candidates must achieve a minimum score of 40 percent in at least five subjects, including English and mathematics. But one striking feature of the results is that pass rates can fluctuate—dramatically—from year to year. In Ghana (figure 1), for instance, the pass rate in English has remained fairly stable over the past six years, but for mathematics, in contrast, it has varied between 54 percent and 86 percent over the same period (figure 2).

Figure 1. Ghana’s WASSCE Core English performance (percent)

Figure 2. Ghana’s WASSCE Core Mathematics performance (percent)
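The pass rule described above (at least 40 percent in at least five subjects, including English and mathematics) can be written down as a short check. This is only a minimal sketch of the rule as stated in this post: the subject labels, the function name `passes_wassce`, and the sample marks are hypothetical, and actual WASSCE grading may involve details not covered here.

```python
from typing import Mapping

PASS_MARK = 40.0                          # percent, as stated in the text
REQUIRED = {"English", "Mathematics"}     # hypothetical subject labels
MIN_SUBJECTS = 5                          # minimum number of subjects passed


def passes_wassce(scores: Mapping[str, float]) -> bool:
    """Return True if the marks satisfy the pass rule described in the text."""
    # Subjects in which the candidate reached the fixed pass mark.
    passed = {subject for subject, mark in scores.items() if mark >= PASS_MARK}
    # Both required subjects must be passed, and at least five subjects overall.
    return REQUIRED <= passed and len(passed) >= MIN_SUBJECTS


# Invented marks, for illustration only.
candidate = {
    "English": 55, "Mathematics": 41, "Science": 62,
    "Social Studies": 48, "Economics": 39, "Geography": 70,
}
result = passes_wassce(candidate)
```

Because the threshold is a constant, a swing in the pass rate under this rule has to come from a change in students' marks or from a change in how hard the marks were to earn.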

On the face of it, large year-on-year fluctuations in achievement might be explained by rapid shifts in the quality of teaching, student motivation, and so on. So, in an exploratory paper, Abreh, Owusu & Amedahe (2018) look at Ghana’s WASSCE performance since 2007 and try to explain changes in achievement. They find that the relatively high performance in 2011 and 2012 is partly due to a nationwide shift to a four-year senior high school program with an intensive preparatory year on top of the usual three. Curriculum changes in 2010 also mean that comparisons before and after this year are more difficult to make. But the authors struggle to find factors at the student, teacher, or school levels that get close to explaining the sharp changes in mathematics achievement since 2012.

Another possible explanation for the variation in pass rates is that the test is not comparable over time. If that is true, then it has obvious implications for the fairness and efficiency of the national examination system, particularly the fairness of entry to tertiary institutions, and losses to the economy from the misallocation of talent. And if the WASSCE does not measure what students ought to be learning, then does it reward—and perhaps incentivize—the wrong things in the classroom?

Why does this matter so much in 2020?

The stakes in the WASSCE examinations are high. Students spend, collectively, millions of hours each year cramming for tests that may have limited validity. Unreliable exams blunt the incentives for human capital generation, incentivize bad pedagogy in schools, and undermine employers’ faith in school degrees as a signal of human capital—with potential macro implications for the labor market and economy as a whole.

If exams are not comparable, it is plausible that tens of thousands of candidates who might have earned a qualification if they had taken the WASSCE in 2019 fail to do so simply because they took it in 2018. Although this matters less for students vying for limited university places in any single year (since they will have taken the same-difficulty test in the same year), if candidates from multiple WASSCE cohorts, or from a November re-sit, apply for the same spaces then differences in test difficulty can have a meaningful effect on who enters.

At the national level, governments and civil society use WASSCE as a monitoring mechanism. The publication of results is big news, making the front page of newspapers from Nigeria to Liberia. Reports of unsatisfactory results influence education policy and financing, and have led Ghana’s Ministry of Education to (repeatedly) probe “unsatisfactory results for Mathematics and English Language” and what they called a “massive failure by our students,” to identify reforms that may improve future performance. And in 2020, Ghana’s results will inform judgments on whether the senior high school fee abolition policy (“Free SHS”) has been an effective way to rapidly increase access, or the cause of a decline in quality at the secondary level.

This is why comparability over time is so important. Major policy decisions are based on the exams, as are significant spending decisions. The Ghana Education Service is under fire for spending money to procure 400,000 sets of questions and answer booklets to support candidates preparing for the 2020 WASSCE (which we still expect to see happen!); and Vice President Mahamudu Bawumia said that the Ghanaian government had spent 56 million cedis (approximately 10 million USD) to help exam candidates excel this May, before today’s cancellation.

Today’s WASSCE cancellation is deeply unfortunate for students and for the education system at large (as it is for the students at all levels who will be missing out on the benefits of education as schools across the region close). But it also creates a moment for the West African Examinations Council and the five governments that administer the WASSCE to take a look at the tests’ potential flaws and improve them before another set of students sits for exams. To support that, we are building on earlier research to empirically assess the reliability and validity of WASSCE examinations. We have administered a set of hybrid exams, composed of items from multiple past WASSCE exam papers, to a fixed sample of students, allowing us to link scales across multiple years and test for changes in difficulty over time. Watch this space for findings.
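The logic of the hybrid exam can be illustrated with a deliberately simple sketch. When the same students answer items drawn from several past papers, any gap in the share of correct answers between source years cannot come from differences between cohorts, so it points to differences in difficulty. The function name, the data layout, and the toy responses below are all invented for illustration. The sketch uses a plain proportion-correct comparison, which is an assumption made here for simplicity and not a description of the analysis used in the study.

```python
from collections import defaultdict


def share_correct_by_year(responses):
    """Share of correct answers per source-paper year.

    responses: iterable of (student_id, paper_year, is_correct) tuples,
    where every student answers items drawn from every paper year.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for _student, year, is_correct in responses:
        total[year] += 1
        correct[year] += int(is_correct)
    return {year: correct[year] / total[year] for year in total}


# Toy responses, invented purely for illustration (not real results).
toy = [
    ("s1", 2018, True),  ("s1", 2019, True),
    ("s2", 2018, False), ("s2", 2019, True),
    ("s3", 2018, False), ("s3", 2019, False),
    ("s4", 2018, True),  ("s4", 2019, True),
]
shares = share_correct_by_year(toy)
gap = shares[2019] - shares[2018]
```

In this toy data the same four students do better on the items from one paper than on the items from the other, which is the kind of signal that would suggest the two papers were not equally hard.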

With thanks to Aisha Ali, Lee Crawfurd, Dave Evans, Alexis Le Nestour, & Justin Sandefur for helpful comments.

DISCLAIMER & PERMISSIONS

CGD's publications reflect the views of the authors, drawing on prior research and experience in their areas of expertise. CGD is a nonpartisan, independent organization and does not take institutional positions. You may use and disseminate CGD's publications under these conditions.