
UNESCO tells us that only one in seven low- and middle-income countries knows how much learning has been lost due to COVID school closures. Where there has been no measurement, simulations of learning loss are beginning to take the place of empirical facts. Yet countries examine millions of kids each year in highly standardised formats—can’t we use these test results to understand how learning outcomes are changing?

Governments (and students) pay for public exams to maintain standards, and use results to track performance. Of the 55 education sector plans hosted by the Global Partnership for Education, 34 include targets for exam results in their key performance indicators. If governments intend to use them in this way, then exam results should also be a decent candidate for measuring the lasting effects of COVID on learning.

But newly compiled data raise questions about using exam results for this purpose. In several cases, they may not be well suited to monitoring performance at all.

High-stakes exams dominate assessment and drive public debate around how well individual schools—and types of schools—are performing. But we’ve barely scratched the surface on how to use exam data to monitor or improve outcomes. Supporting countries to conduct high-quality public exams should be a priority.

Exam results dance around from one year to the next

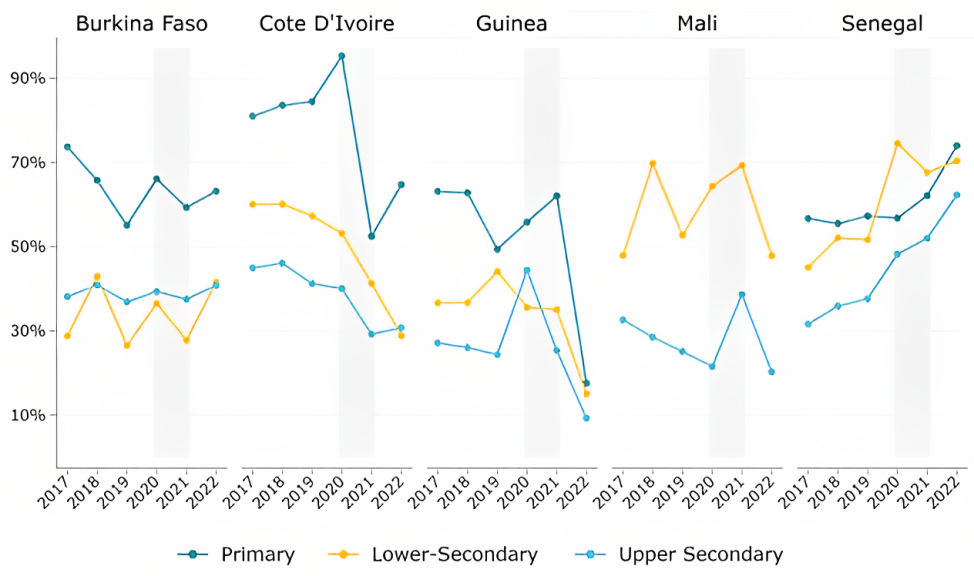

Exam pass rates tend to fluctuate significantly year on year. While expanding our exams database, we’ve mapped primary and secondary level pass rates for several countries over the past six years. Our goal was to determine how participation and performance have changed since 2020 using these test results. And we see sudden improvements, sharp declines and large overall changes in exam outcomes throughout the period.

Changes brought on by COVID do play a role in some cases, but certainly can’t explain everything. For example, Côte d’Ivoire allowed nearly all kids to clear the primary exam in 2020 and then clamped down in 2021. Yet in Côte d’Ivoire, as elsewhere, swings of up to 10 or 20 percent occurred annually before 2020 and have continued since. There are very likely changes in the stringency of pass hurdles too.

These fluctuations fuel public calls for reform, and routinely influence policy discussions. But without a clear understanding of the origin of year-to-year changes, it's difficult to draw any conclusions about the impacts of policies. How much of this variation represents genuine changes in student preparation, and how much is due to shifts in the examination process or how grades are awarded?

Figure 1: Exam results danced about before school closures and have done so since

Note: Mali removed the Primary School Leaving Exam (Certificat d'Études Primaires) in 2010. All the exams represented were postponed by between one and three months in 2020, with the exception of the primary exam in Côte d’Ivoire, which was cancelled. Source: data on exam candidates and results, as reported by examination agencies in each country and recorded in our database.

If you can’t compare then you can’t track performance, nor hold anyone to account

High-stakes exams help governments to maintain educational standards and track performance, but their usefulness relies on comparability over time. A recent survey of policymakers shows that they are generally poorly informed about the levels of learning in their countries. That’s less surprising when the backdrop is exam pass rates bouncing between 48 and 73 percent (Mali, lower secondary), or falling from more than 60 percent to less than 30 percent in the space of five years (Côte d’Ivoire, lower secondary).
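To make the size of these swings concrete, here is a minimal sketch of the arithmetic, using only the figures quoted above. It assumes 48 and 73 are the low and high points for Mali, and takes 60 and 30 as rounded bounds for Côte d’Ivoire (the text says "more than 60" and "less than 30", so the true change is at least this large). The function names are illustrative, not part of any existing tool.

```python
def point_change(start_rate, end_rate):
    """Absolute change in a pass rate, in percentage points."""
    return end_rate - start_rate


def relative_change(start_rate, end_rate):
    """Change expressed as a percentage of the starting pass rate."""
    return (end_rate - start_rate) / start_rate * 100


# Pass rates (in percent) taken from the text; the Côte d'Ivoire values
# are rounded bounds, not exact figures.
examples = {
    "Mali, lower secondary (low to high)": (48.0, 73.0),
    "Côte d'Ivoire, lower secondary (five-year fall)": (60.0, 30.0),
}

for label, (start, end) in examples.items():
    points = point_change(start, end)
    relative = relative_change(start, end)
    print(f"{label}: {points:+.0f} points, {relative:+.1f}% relative")
```

The two measures tell different stories: a move from 48 to 73 is 25 percentage points, but roughly half as many candidates again passing. It is worth stating which one is meant whenever a swing is quoted.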

Highly variable pass rates undermine the goal of orienting education systems around learning outcomes too. Policymakers are operating in a system where average performance appears to change significantly from year to year. This makes it difficult to build accountability for educational performance—how do you do that when exam pass rates vary by 10, 20, 30 percent from one year to the next?

Regular test data are more valuable to governments than large-scale assessments—and they’re already being paid for

One solution may be to increase the frequency or coverage of regional learning assessments. This is happening, with the next wave of PASEC expanding its reach and due in 2024. While these sample-based surveys offer many benefits, such as driving global monitoring and advocacy efforts and enabling comparisons based on student background factors, they are infrequent and often expensive. For the countries shown above, the shortest gap between large-scale regional or international assessments is five years and the longest is 13 years. Governments need more regular performance indicators.

High-quality exam data that cover all students can be particularly valuable to policymakers who need to see more than a national or regional average. Annual, population-level data can be disaggregated to district or school levels to identify local changes in performance. These can then be combined with other large datasets, including on the distribution of teachers, or indicators of deprivation. They also measure what surveys may miss: general equilibrium effects of policies, and government implementation challenges.
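As a rough illustration of what disaggregation could look like in practice, the sketch below computes pass rates by district and year from candidate-level records, then the year-on-year change for each district. The records, district names, and function names are entirely hypothetical and exist only to show the mechanics; real exam data would come from the examination agency and would be far larger.

```python
from collections import defaultdict

# Hypothetical candidate-level records: (year, district, passed).
# These values are invented for illustration only.
records = [
    (2019, "District A", True),
    (2019, "District A", False),
    (2019, "District B", True),
    (2019, "District B", True),
    (2020, "District A", True),
    (2020, "District A", True),
    (2020, "District B", True),
    (2020, "District B", False),
]


def pass_rates_by_district(rows):
    """Return {(district, year): pass rate in percent}."""
    counts = defaultdict(lambda: [0, 0])  # [passes, candidates]
    for year, district, passed in rows:
        counts[(district, year)][0] += int(passed)
        counts[(district, year)][1] += 1
    return {key: 100 * passes / total for key, (passes, total) in counts.items()}


def yearly_changes(rates):
    """Return {(district, year): change in percentage points on the previous year}."""
    changes = {}
    for (district, year), rate in rates.items():
        previous = rates.get((district, year - 1))
        if previous is not None:
            changes[(district, year)] = rate - previous
    return changes


rates = pass_rates_by_district(records)
changes = yearly_changes(rates)
for (district, year), delta in sorted(changes.items()):
    print(f"{district} {year}: {delta:+.1f} points")
```

In this toy example the two districts move in opposite directions, so the national pass rate would show no change at all. That is the kind of local movement a national or regional average can hide.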

Supporting countries to conduct high-quality public exams should be a priority

Despite the widespread use of high-stakes public exams in primary and secondary schools, little effort has gone into scrutinising their quality or how results are used. This includes their implications for education policy and labour markets, as well as the quality of the test processes. Existing research that has looked at test instruments has revealed poor-quality items and limited alignment with the curriculum.

High-stakes exams are presented as the “fairest and best system available to us” for filtering among candidates, and for increasing opportunities for students from disadvantaged backgrounds. To fulfil these functions, an examination should be unpredictable, but not chaotic. With the level of variability that we see here, and document elsewhere, it’s unlikely that opportunities are being distributed efficiently, or equitably.

The cost of assessing outcomes systematically is extremely low relative to the overall cost of providing schooling. Investments in well-designed national examination systems can provide cost-effective insights into a wide range of questions. Supporting countries in delivering high-quality public exams should be prioritised.

With thanks to Lee Crawfurd and Rita Parakis for helpful comments.

DISCLAIMER & PERMISSIONS

CGD's publications reflect the views of the authors, drawing on prior research and experience in their areas of expertise. CGD is a nonpartisan, independent organization and does not take institutional positions. You may use and disseminate CGD's publications under these conditions.
