
The PASEC education assessment generates benefits that far exceed its costs through four channels: (1) triggering policy reform, (2) guiding education spending, (3) enabling research, and (4) informing donor programmes. But arguing that something is worth funding is different from arguing that it is well run. PASEC faces real challenges: rising costs, delayed reports, uneven data access, and a growing gap between what it tries to do and what it can realistically deliver. If the programme is to survive the withdrawal of financial support from Agence Française de Développement (AFD) and secure new funding, it needs to show that it can evolve.

We made the economic case for PASEC in our first blog post, so this one explores how to maximise its value across each channel. Our ideas draw on comparisons with other assessment programmes, on conversations with stakeholders across governments and donor agencies, and on practical experience working with the programme. None require reinventing PASEC. Most require making deliberate choices about what the programme should and should not be, and how to make its core missions as effective as possible.

1. Maximise the PASEC shock

The reform shock is PASEC's most powerful channel. When results reveal uncomfortable truths about an education system, they can trigger a political response that leads to large-scale reform. Following Brazil's last-place finish in the 2000 PISA round and Peru’s in 2012, shock-inspired reforms in both countries produced impressive learning gains over the following decade. The best examples in Africa to date are Niger, following the 2014 PASEC round, and Côte d'Ivoire, following the 2019 PASEC round.

The shock mechanism depends on several conditions that PASEC can influence:

Speed matters most. Education ministers change frequently in Francophone Africa (and Latin America). Policy windows open and close quickly. A PASEC report that arrives two or three years after data collection may find that the reformist minister who commissioned it has moved on and that the political opening has passed. PISA publishes its main results roughly 11 months after the end of data collection. TIMSS and PIRLS follow a similar timeline. PASEC should aim for a two-track model: a first set of key findings, presented as short policy briefs with clear visuals, released within six to twelve months of data collection. The full technical reports, which require more time for psychometric analysis and quality assurance, would follow later. The point is to ensure that results enter the policy conversation when they are most relevant. A media strategy modelled on PISA’s, which blankets the airwaves with test results, commentary, and insights from key education policy figures and researchers as soon as the first results are available, would have a big payoff.

Format matters too. PASEC’s reports are comprehensive, technically rigorous documents. They are also long and largely inaccessible to the policymakers and journalists who are the programme's most important audience. A communications strategy that produces targeted, audience-specific outputs (data visualisations, country-level summaries, comparison tools) would multiply the programme's impact at relatively low cost. Brazil's IDEB translates complex assessment data into a single index for every school and municipality in the country. PISA's country notes are two-page documents that ministers can read in the car on the way to a press conference. PASEC does not need to copy these models, but it needs to take the communications challenge as seriously as it takes the measurement challenge.

Cross-country comparison is the trigger. There is evidence from PISA and elsewhere that cross-national assessments are politically more potent than domestic ones precisely because they expose performance relative to peers. This is what causes the shock. PASEC should lean into this comparative dimension: presenting results in ways that make cross-country patterns immediately visible, highlighting which countries are improving and which are falling behind, and producing materials that national media can pick up without needing a statistics degree to interpret.

Post-publication follow-up closes the loop. A PASEC shock is only valuable if it leads somewhere. The programme has experimented with roadmaps in some countries, translating results into concrete policy actions with follow-up mechanisms. Scaling this approach, perhaps through structured partnerships with ministries and development partners in the months following publication, would increase the likelihood that the results lead to reform rather than sit on a shelf. Having a panel of experts lined up to make the case for specific reforms implemented in the countries making the most progress would help inspire and guide reformist ministers.

2. Make PASEC data more useful for planners

Education planners in PASEC countries allocate roughly $14.5 billion per year across competing priorities. PASEC data help them make better decisions by providing diagnostic information on where learning is and is not happening. But the utility of the data depends on how they are packaged and delivered.

Subnational disaggregation is what planners need. National averages tell a minister whether the system is improving overall, but don’t tell a regional director where to target resources. Several PASEC countries have requested larger sample sizes to allow for subnational breakdowns, and this is where PASEC data become directly actionable for planning. The trade-off is cost: larger sample sizes are more expensive. PASEC needs to be strategic about where subnational disaggregation adds the most value, rather than uniformly expanding sample sizes. In countries where regional disparities are large and well-documented, such as Nigeria, even modest disaggregation can sharpen resource allocation. In smaller or more homogeneous systems, national estimates may be sufficient.

Sampling strategies deserve fresh scrutiny. In some countries, sample sizes have grown over successive PASEC cycles, partly driven by disaggregation requests and partly by high intra-class correlations (the degree to which students within the same school perform similarly), which reduce the statistical efficiency of school-based sampling. Understanding why these correlations are high, and whether they reflect real features of the education system or artefacts of test design, could lead to more efficient sampling without sacrificing precision.
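To make the link between intra-class correlation and sample size concrete, here is a rough sketch using the standard Kish design-effect approximation, deff = 1 + (m - 1) × ICC, where m is the number of students tested per school. The function names, the target of 400 students, the cluster size of 20, and the correlation values are all illustrative assumptions, not PASEC parameters.

```python
import math


def design_effect(cluster_size: int, icc: float) -> float:
    """Kish approximation of the variance inflation caused by sampling
    whole clusters (schools) instead of individual students."""
    return 1 + (cluster_size - 1) * icc


def schools_needed(effective_n: int, cluster_size: int, icc: float) -> int:
    """Number of schools required for a clustered sample to match the
    precision of a simple random sample of `effective_n` students."""
    total_students = effective_n * design_effect(cluster_size, icc)
    # Round before ceil so floating-point noise does not add a spurious school.
    return math.ceil(round(total_students / cluster_size, 6))


# Hypothetical values for illustration only (not PASEC figures).
for icc in (0.1, 0.3, 0.5):
    print(f"ICC={icc}: {schools_needed(400, 20, icc)} schools")
```

Under these assumptions, moving from an intra-class correlation of 0.1 to 0.5 more than triples the number of schools needed for the same precision, which is why understanding the source of high correlations can matter as much for costs as the disaggregation requests themselves.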

Availability and accessibility of results matter. Sector planning teams and district education officers rarely read 300-page technical reports. The PASEC programme could produce country-specific planning briefs presented in user-friendly, accessible formats: tables showing regional performance, trend data, and correlations with observable inputs like teacher qualifications or textbook availability. These low-cost outputs would make the same data more useful.

Interoperability between PASEC and national data systems would multiply the value. Education Management Information Systems (EMIS) across the region are often focused on counting inputs rather than measuring outcomes. PASEC learning data, linked to EMIS administrative records, would create a much richer picture for planners. This is technically feasible and has been done in other contexts (Brazil's Censo Escolar is linked to SAEB assessment data), but requires deliberate investment in interoperability.
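As a minimal sketch of what such a link could look like, the example below joins school-level assessment results onto EMIS records. It assumes both sources share a common school identifier, which in practice is often the hardest part to secure. All field names and values are invented for illustration and do not reflect actual PASEC or EMIS file layouts.

```python
# Hypothetical records; field names and values are invented for illustration.
emis_records = [
    {"school_id": "S001", "region": "North", "pupils_per_textbook": 3.0},
    {"school_id": "S002", "region": "South", "pupils_per_textbook": 1.5},
    {"school_id": "S003", "region": "South", "pupils_per_textbook": 2.0},
]
assessment_scores = [
    {"school_id": "S001", "mean_reading_score": 480.0},
    {"school_id": "S003", "mean_reading_score": 530.0},
]


def link_by_school(emis, scores, key="school_id"):
    """Left-join assessment scores onto EMIS rows using a shared school ID.

    Schools outside the assessment sample keep their EMIS fields and get
    None for the score, so coverage gaps stay visible to planners.
    """
    score_by_id = {row[key]: row for row in scores}
    linked = []
    for row in emis:
        match = score_by_id.get(row[key], {})
        linked.append({**row, "mean_reading_score": match.get("mean_reading_score")})
    return linked


linked = link_by_school(emis_records, assessment_scores)
for row in linked:
    print(row)
```

Because the assessment covers only a sample of schools, a left join of this kind keeps every EMIS school in view and makes explicit which ones have learning data attached.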

3. Spur more research

For many questions, PASEC is the only available dataset on education quality in Francophone Africa. This region produces a fraction of the research output of comparably sized Anglophone countries. At least 20 published studies have used PASEC 2014 or 2019 microdata, and the pace is accelerating. But the availability of data remains uneven, which limits the research value PASEC generates.

The principle is simple: publicly funded PASEC data are a public good. Researchers report delays in obtaining datasets, inconsistent documentation, and restricted access to some variables. Datasets should be made available within a defined timeline after quality checks, along with clear documentation, codebooks, and reproducibility standards. PISA makes all microdata available for download shortly after the results are disseminated. TIMSS and PIRLS do the same through the IEA's data repository. PASEC should follow this model.

Better documentation would lower the barrier to entry. Many potential PASEC data users, particularly researchers based in Francophone Africa, face practical obstacles: unclear variable definitions, missing codebooks, and inconsistent coding across waves. A modest investment in data curation—standardised variable names, trilingual documentation, harmonised datasets across cycles—would significantly expand the research user base.

Partnerships with regional universities could build a research ecosystem. PASEC data are currently used mostly by researchers at institutions in Europe and North America. Building relationships with universities in Dakar, Abidjan, Ouagadougou, and Kinshasa through data workshops, joint research programmes, or thesis partnerships would help develop a Francophone African community of researchers working on education evidence. This serves both the research channel and the broader capacity strengthening mission.

A research programme would focus attention on the highest-value questions. Rather than waiting for external researchers to discover the data, the PASEC programme could identify priority research questions emerging from each cycle and actively commission or encourage studies on those topics. The new African Fellows program, launched by Stanford professor Eric Hanushek and the Yidan Foundation, is a natural fit for this kind of partnership. The program can link sub-Saharan Africa’s most promising young education economists with high-priority research topics and built-in funding. Some international assessments do this through competitive research grants or partnerships with academic networks. The cost is small relative to the visibility and policy relevance it generates.

4. Serve donors better

Collectively, international donors channel roughly $1.8 billion per year to education in PASEC countries. PASEC data are already embedded in World Bank project appraisals, Global Partnership for Education (GPE) country programme documents, and AFD project frameworks. Strengthening this channel means using PASEC data to inform programme design, monitoring, and evaluation.

Timeliness is critical for donors, too. Donor project cycles are typically four to five years. If PASEC results arrive too late in the project cycle, they cannot inform mid-term reviews or the design of the next phases. The faster publication schedule discussed above would serve donors as much as it serves governments.

Cost transparency strengthens the case for funding. The PASEC secretariat centrally manages about 63 percent of the total programme costs. Greater transparency about how these funds are allocated, including the share spent on external technical partners for psychometrics and test development, would strengthen accountability and help build the case for continued donor support. Donors are more likely to fund a programme that can show exactly what each dollar buys.

Building in-house technical capacity reduces long-term costs. A significant share of PASEC’s central budget goes to external contractors for psychometric analysis and test development. Over time, strengthening this capacity within the secretariat would reduce dependency on expensive external support, lower costs, and give donors confidence in the programme's sustainability.

Alignment with donor results frameworks would make the data easier to use. PASEC could be more proactive in working with donors to ensure indicators, benchmarks, and reporting timelines align with the results frameworks of major education programmes in the region. This does not mean tailoring the assessment to donor preferences, but it does mean making it easier for donors to use PASEC data in the formats they need.

The final question: what should PASEC be?

All four channels depend on a question that PASEC has not fully answered: what is the programme's core mission?

PASEC is currently several things at once: a cross-national assessment, a capacity strengthening programme for national evaluation teams, a substitute for national assessments in countries that lack them, and a vehicle for SDG monitoring. Each role is valuable. The problem is that pursuing all of them simultaneously at its current scale and level of financing creates tensions that are becoming harder to manage.

If PASEC's primary mission is to trigger policy reform and inform public debate (channel 1), then speed and communications matter more than comprehensiveness. If the primary mission is to serve as a planning tool (channel 2), then large sample sizes and subnational disaggregation make sense, but this drives up costs. If enabling research is a priority (channel 3), then open data and documentation are essential investments. If serving donors is central (channel 4), then alignment with donor timelines and reporting formats matters most.

These missions are not mutually exclusive, but PASEC cannot pursue all of them at the same level of ambition with its current resources. The most successful international assessments have stayed focused. PIRLS has measured reading at one grade level for over two decades, resisting pressure to expand. Several years ago, PISA tried to add a household application to reach young people who were no longer in secondary school, but it retreated after finding the costs and administrative complexity too great. It then returned its focus to its core mission: assessing the reading, math, and science skills of 15-year-old students.

PASEC, by contrast, has expanded from one grade level and two subjects in 2014 to three grade levels and six subject-grade combinations in 2025, while also adding teacher assessments, parent surveys, and contextual questionnaires. Data on teacher quality and parental attitudes, involvement, and education spending are all extremely valuable, but gathering them raises the cost of data collection and spreads the programme thin.

PASEC today has two clear areas of comparative advantage. First, it is the only measure of early grade literacy and numeracy that has statistical validity across countries and over time. Oral testing is inherently time-consuming and costly. Other available assessments cannot match the psychometric qualities and administration protocols that make PASEC’s second-grade oral tests valid for programme evaluations and global monitoring. In the absence of a clear commitment to PASEC’s expansion, the UN’s SDG Technical Committee dropped the measurement of grade 2 skills for global SDG monitoring in 2025. Given the donor focus on foundational skills and the large volume of funding at that level, there is a compelling case for building on PASEC’s capacity despite the high costs of oral testing.

The second area is PASEC’s growing country coverage. It has expanded steadily beyond Francophone Africa and now includes Lusophone Africa (Mozambique and São Tomé) and Anglophone Nigeria. Nigeria was included in the PASEC 2025 cycle, which will generate the first comparable learning data for 55 percent of sub-Saharan Africa’s children. Anyone looking for the most cost-effective way to expand learning measurement in Africa would be hard-pressed to find a better strategy than building on PASEC, with an immediate focus on getting as many countries in Anglophone Africa as possible to join the 2028 cycle.

Articulating these and other priorities in dialogue with the multilateral banks, bilateral donors, and major foundations could lead PASEC to a clearer, more strategic identity that would enable better decisions about what to measure, how to report, how much to spend, and what to ask donors to fund. It would also make it easier to communicate the programme's value to both governments and funders.

The path forward

None of these reforms requires PASEC to become a different programme. They require it to become a more deliberate one: faster at delivering results, more open with its data, more strategic about communications, and with clearer international support for its mission.

The timing is urgent. AFD's and Swiss DDC’s withdrawal from secretariat funding creates a financing gap that will need to be filled. The World Bank, GPE, the Gates Foundation, bilateral supporters, and PASEC's member governments all have a stake in the programme's survival. But donors are more likely to step in if they see a programme that is reforming itself, can articulate what it delivers and at what cost, and has a credible plan to do more with what it has.

The economic case for PASEC is strong. At nine cents per child per year, PASEC is one of the most cost-effective investments available in African education. The question now is whether the programme and its stakeholders can act quickly enough to protect that investment and make it even more valuable.

The authors are grateful to Jack Rossiter, Luis Crouch, Abdullah Ferdous, and Clio Dinthilac for their ideas and suggestions.

L’évaluation PASEC génère des bénéfices qui dépassent largement ses coûts à travers quatre canaux : (1) déclencher des réformes, (2) orienter les dépenses d’éducation, (3) permettre la recherche, et (4) informer les programmes des bailleurs. Mais démontrer qu’un programme mérite d’être financé n’est pas la même chose que démontrer qu’il fonctionne bien. Le PASEC fait face à de vrais défis : des coûts en hausse, des rapports publiés tardivement, un accès inégal aux données, et un écart croissant entre ses ambitions et ce qu’il peut raisonnablement réaliser. Si le programme veut survivre au retrait du soutien financier de l’Agence Française de Développement (AFD) et obtenir de nouveaux financements, il doit montrer qu’il est capable d’évoluer.

Nous avons présenté l’argumentaire économique du PASEC dans notre premier blog, celui-ci explore donc comment maximiser sa valeur à travers chaque canal. Nos idées s’appuient sur des comparaisons avec d’autres programmes d’évaluation, sur des échanges avec des acteurs au sein des gouvernements et des agences de coopération, et sur une expérience pratique du programme. Aucune ne nécessite de réinventer le PASEC. La plupart implique de faire des choix délibérés sur ce que le programme devrait et ne devrait pas être, et sur la manière de rendre ses missions essentielles aussi efficaces que possible.

1. Maximiser le choc PASEC

Le choc des résultats est le canal le plus puissant du PASEC. Lorsque les résultats révèlent des vérités dérangeantes sur un système éducatif, ils peuvent déclencher une réponse politique menant à des réformes de grande ampleur. Après la dernière place du Brésil au PISA 2000 et celle du Pérou en 2012, les réformes inspirées par le choc ont produit des gains d’apprentissage impressionnants dans les deux pays au cours de la décennie suivante. Les meilleurs exemples en Afrique à ce jour sont le Niger après le PASEC 2014 et la Côte d’Ivoire après le PASEC 2019.

Le mécanisme du choc dépend de plusieurs conditions que le PASEC peut influencer :

La rapidité est essentielle. Les ministres de l’Éducation changent fréquemment en Afrique francophone (et en Amérique latine). Les fenêtres d’opportunité politique s’ouvrent et se ferment rapidement. Un rapport PASEC qui arrive deux ou trois ans après la collecte des données risque de trouver que le ministre réformateur qui l’avait commandé est parti et que l’ouverture politique est passée. PISA publie ses principaux résultats environ 11 mois après la fin de la collecte. TIMSS et PIRLS suivent un calendrier similaire. Le PASEC devrait viser un modèle à deux volets : un premier ensemble de résultats clés, présentés sous forme de notes de politique concises avec des visuels clairs, publiés dans les six à douze mois suivant la collecte. Les rapports techniques complets, qui nécessitent plus de temps pour l’analyse psychométrique et le contrôle qualité, suivraient plus tard. L’enjeu est de s’assurer que les résultats entrent dans le débat politique quand ils sont les plus pertinents. Une stratégie médiatique inspirée de celle de PISA, inondant les médias de résultats, commentaires et analyses de figures clés de la politique éducative et de chercheurs dès la publication des premiers résultats, aurait un impact considérable.

Le format compte aussi. Les rapports du PASEC sont des documents complets et techniquement rigoureux. Ils sont aussi longs et largement inaccessibles pour les décideurs et les journalistes, qui sont le public le plus important du programme. Une stratégie de communication produisant des contenus ciblés et adaptés à chaque audience (visualisations de données, synthèses par pays, outils de comparaison) démultiplierait l’impact du programme à un coût relativement faible. L’IDEB brésilien traduit des données d’évaluation complexes en un indice unique pour chaque école et chaque municipalité du pays. Les notes par pays de PISA sont des documents de deux pages qu’un ministre peut lire en voiture en se rendant à une conférence de presse. Le PASEC n’a pas besoin de copier ces modèles, mais il doit prendre le défi de la communication aussi au sérieux que celui de la mesure.

La comparaison entre pays est le déclencheur. Il existe des données probantes, issues de PISA et d’ailleurs, montrant que les évaluations internationales sont politiquement plus puissantes que les évaluations nationales précisément parce qu’elles exposent la performance relative aux pairs. C’est ce qui provoque le choc. Le PASEC devrait pleinement exploiter cette dimension comparative : présenter les résultats de manière à rendre les tendances entre pays immédiatement visibles, souligner quels pays progressent et lesquels décrochent, et produire des supports que les médias nationaux peuvent reprendre sans avoir besoin d’un diplôme en statistiques pour les interpréter.

Le suivi post-publication boucle la boucle. Un choc PASEC n’a de valeur que s’il mène quelque part. Le programme a expérimenté des feuilles de route dans certains pays, traduisant les résultats en actions politiques concrètes avec des mécanismes de suivi. Généraliser cette approche, peut-être à travers des partenariats structurés avec les ministères et les partenaires au développement dans les mois suivant la publication, augmenterait la probabilité que les résultats mènent à des réformes plutôt qu’ils ne restent sur une étagère. Disposer d’un panel d’experts prêts à plaider pour des réformes spécifiques mises en œuvre dans les pays ayant le plus progressé aiderait à inspirer et guider les ministres réformateurs.

2. Rendre les données PASEC plus utiles pour la planification

Les planificateurs de l’éducation dans les pays du PASEC allouent environ 14,5 milliards de dollars par an entre des priorités concurrentes. Les données PASEC les aident à prendre de meilleures décisions en fournissant des informations diagnostiques sur les endroits où les apprentissages ont lieu et ceux où ils font défaut. Mais l’utilité des données dépend de la manière dont elles sont présentées et diffusées.

La désagrégation infranationale est ce dont les planificateurs ont besoin. Les moyennes nationales indiquent à un ministre si le système s’améliore globalement, mais ne disent pas à un directeur régional où cibler les ressources. Plusieurs pays du PASEC ont demandé des échantillons plus grands pour permettre des analyses infranationales, et c’est là que les données PASEC deviennent directement exploitables pour la planification. Le compromis est le coût : des échantillons plus grands coûtent plus cher. Le PASEC doit être stratégique quant aux endroits où la désagrégation infranationale apporte le plus de valeur, plutôt que d’élargir uniformément les tailles d’échantillon. Dans les pays où les disparités régionales sont importantes et bien documentées, comme le Nigeria, même une désagrégation modeste peut améliorer l’allocation des ressources. Dans les systèmes plus petits ou plus homogènes, les estimations nationales peuvent suffire.

Les stratégies d’échantillonnage méritent un réexamen. Dans certains pays, les tailles d’échantillon ont augmenté au fil des cycles, en partie à cause des demandes de désagrégation et en partie en raison de fortes corrélations intra-classes (le degré de similarité des performances des élèves au sein d’une même école), réduisant l’efficacité statistique de l’échantillonnage par école. Comprendre pourquoi ces corrélations sont élevées et si elles reflètent de vraies caractéristiques du système éducatif ou des artefacts de la conception des tests pourrait conduire à un échantillonnage plus efficace sans sacrifier la précision.

La disponibilité et l’accessibilité des résultats comptent. Les équipes de planification sectorielle et les responsables éducatifs de district lisent rarement des rapports techniques de 300 pages. Le PASEC pourrait produire des notes de planification par pays dans des formats accessibles et faciles à utiliser : tableaux montrant les performances régionales, les données de tendance et les corrélations avec des intrants observables comme les qualifications des enseignants ou la disponibilité des manuels scolaires. Ces productions à faible coût rendraient les mêmes données plus utiles.

L’interopérabilité entre le PASEC et les systèmes nationaux de données démultiplierait la valeur. Les systèmes d’information pour la gestion de l’éducation (SIGE) de la région sont souvent centrés sur le comptage des intrants plutôt que sur la mesure des résultats. Les données d’apprentissage du PASEC, reliées aux données administratives des SIGE, créeraient un tableau bien plus riche pour les planificateurs. C’est techniquement faisable et cela a été fait dans d’autres contextes (le Censo Escolar brésilien est relié aux données d’évaluation du SAEB), mais cela nécessite un investissement délibéré dans l’interopérabilité.

3. Stimuler la recherche

Pour de nombreuses questions, le PASEC est le seul jeu de données disponible sur la qualité de l’éducation en Afrique francophone. Cette région produit une fraction de la production de recherche de pays anglophones de taille comparable. Au moins 20 études publiées ont utilisé les microdonnées PASEC 2014 ou 2019, et le rythme s’accélère. Mais la disponibilité des données reste inégale, ce qui limite la valeur de recherche que le PASEC génère.

Le principe est simple : les données PASEC financées sur fonds publics sont un bien public. Les chercheurs signalent des retards pour obtenir les bases de données, une documentation incohérente et un accès restreint à certaines variables. Les bases devraient être mises à disposition dans un délai défini après les contrôles de qualité, accompagnées d’une documentation claire, de dictionnaires de variables et de standards de reproductibilité. PISA met toutes ses microdonnées en téléchargement peu après la diffusion des résultats. TIMSS et PIRLS font de même via le répertoire de données de l’IEA. Le PASEC devrait suivre ce modèle.

Une meilleure documentation abaisserait la barrière à l’entrée. De nombreux utilisateurs potentiels des données PASEC, en particulier les chercheurs basés en Afrique francophone, font face à des obstacles pratiques : des définitions de variables peu claires, des dictionnaires manquants et un codage incohérent d’un cycle à l’autre. Un investissement modeste dans la curation des données — noms de variables standardisés, documentation trilingue, bases harmonisées entre les cycles — élargirait considérablement la base d’utilisateurs chercheurs.

Des partenariats avec les universités régionales pourraient construire un écosystème de recherche. Les données PASEC sont actuellement utilisées principalement par des chercheurs d’institutions en Europe et en Amérique du Nord. Développer des relations avec les universités de Dakar, Abidjan, Ouagadougou et Kinshasa à travers des ateliers sur les données, des programmes de recherche conjoints ou des partenariats de thèse aiderait à développer une communauté de chercheurs africains francophones travaillant sur les données probantes en éducation. Cela sert à la fois le canal de la recherche et la mission plus large de renforcement des capacités.

Un programme de recherche concentrerait l’attention sur les questions à plus forte valeur ajoutée. Plutôt que d’attendre que des chercheurs extérieurs découvrent les données, le PASEC pourrait identifier les questions de recherche prioritaires émergeant de chaque cycle et activement commander ou encourager des études sur ces sujets. Le nouveau programme African Fellows, lancé par le professeur de Stanford Eric Hanushek et la Fondation Yidan, est un partenaire naturel pour ce type de collaboration. Le programme peut mettre en relation les jeunes économistes de l’éducation les plus prometteurs d’Afrique subsaharienne avec des sujets de recherche prioritaires et un financement intégré. Certaines évaluations internationales font cela à travers des subventions de recherche compétitives ou des partenariats avec des réseaux académiques. Le coût est faible par rapport à la visibilité et la pertinence politique qu’il génère.

4. Mieux servir les bailleurs

Collectivement, les bailleurs internationaux canalisent environ 1,8 milliard de dollars par an vers l’éducation dans les pays du PASEC. Les données PASEC sont déjà intégrées dans les évaluations de projets de la Banque mondiale, les documents de programme pays du Partenariat mondial pour l’éducation (PME) et les cadres de projets de l’AFD. Renforcer ce canal signifie utiliser les données PASEC pour informer la conception, le suivi et l’évaluation des programmes.

La rapidité est cruciale pour les bailleurs aussi. Les cycles de projet des bailleurs durent généralement quatre à cinq ans. Si les résultats du PASEC arrivent trop tard dans le cycle du projet, ils ne peuvent pas informer les revues à mi-parcours ou la conception des phases suivantes. Le calendrier de publication accéléré discuté plus haut servirait les bailleurs autant que les gouvernements.

La transparence des coûts renforce l’argumentaire pour le financement. Le secrétariat du PASEC gère de manière centralisée environ 63 % des coûts totaux du programme. Une plus grande transparence sur l’allocation de ces fonds, y compris la part consacrée aux partenaires techniques externes pour la psychométrie et le développement des tests, renforcerait la redevabilité et aiderait à plaider pour un soutien continu des bailleurs. Les bailleurs sont plus enclins à financer un programme qui peut montrer exactement ce que chaque dollar finance.

Développer les capacités techniques internes réduit les coûts à long terme. Une part significative du budget central du PASEC va à des prestataires externes pour l’analyse psychométrique et le développement des tests. À terme, renforcer cette capacité au sein du secrétariat réduirait la dépendance à un soutien externe coûteux, abaisserait les coûts et donnerait aux bailleurs confiance dans la pérennité du programme.

Alignement avec les cadres de résultats des bailleurs. Le PASEC pourrait être plus proactif dans son travail avec les bailleurs pour s’assurer que les indicateurs, les repères et les calendriers de publication s’alignent sur les cadres de résultats des grands programmes éducatifs de la région. Cela ne signifie pas adapter l’évaluation aux préférences des bailleurs, mais faciliter l’utilisation des données PASEC dans les formats dont ils ont besoin.

La question finale : que devrait être le PASEC ?

Les quatre canaux dépendent d’une question à laquelle le PASEC n’a pas pleinement répondu : quelle est la mission centrale du programme ?

Le PASEC est actuellement plusieurs choses à la fois : une évaluation internationale, un programme de renforcement des capacités pour les équipes nationales d’évaluation, un substitut aux évaluations nationales dans les pays qui en sont dépourvus, et un véhicule pour le suivi des ODD. Chaque rôle est précieux. Le problème est que poursuivre tous ces objectifs simultanément à son échelle et son niveau de financement actuels crée des tensions de plus en plus difficiles à gérer.

If PASEC's main mission is to trigger reform and inform public debate (channel 1), then speed and communication matter more than comprehensiveness. If the main mission is to serve as a planning tool (channel 2), then large samples and subnational disaggregation are justified, but they drive up costs. If enabling research is a priority (channel 3), then open data and documentation are essential investments. If serving donors is central (channel 4), then alignment with donor timelines and reporting formats matters most.

These missions are not mutually exclusive, but PASEC cannot pursue all of them at the same level of ambition with its current resources. The most successful international assessments have stayed focused. PIRLS has measured reading at a single grade level for more than two decades, resisting pressure to expand. A few years ago, PISA tried adding a household module to reach young people who were no longer in secondary school, but it reversed course after finding that the costs and administrative complexity were too great. It then refocused on its core mission: assessing the reading, mathematics, and science skills of 15-year-olds.

PASEC, by contrast, has gone from one grade level and two subjects in 2014 to three grade levels and six subject-grade combinations in 2025, while also adding teacher assessments, parent surveys, and contextual questionnaires. Data on teacher quality, parental attitudes and involvement, and education spending are all extremely valuable, but gathering them raises the cost of data collection and dilutes the programme's efforts.

PASEC today has two clear comparative advantages. First, it is the only measure of early-primary literacy and numeracy that is statistically valid across countries and over time. Oral assessment is inherently time-consuming and expensive. Other available assessments cannot match the psychometric qualities and administration protocols that make PASEC's grade 2 oral tests valid for programme evaluations and global monitoring. In the absence of a clear commitment to expanding PASEC, the United Nations technical committee on the SDGs dropped the measurement of grade 2 skills from global SDG monitoring in 2025. Given donors' emphasis on foundational skills and the large volume of funding at this level, there is a compelling case for building PASEC's capacity despite the high costs of oral assessment.

The second advantage is PASEC's growing geographic coverage. It has gradually expanded beyond Francophone Africa and now includes Lusophone Africa (Mozambique and São Tomé) as well as Anglophone Nigeria. Nigeria was included in the PASEC 2025 round, which will generate the first comparable learning data for 55 percent of children in sub-Saharan Africa. Anyone looking for the most effective way to expand learning measurement in Africa would be hard pressed to find a better strategy than building on PASEC, with an immediate goal of bringing as many Anglophone African countries as possible into the 2028 round.

Working through these and other priorities in dialogue with the multilateral banks, bilateral donors, and major foundations could give PASEC a clearer and more strategic identity, enabling better decisions about what to measure, how to report, how much to spend, and what to ask donors to fund. It would also make it easier to communicate the programme's value to governments and funders.

The way forward

None of these reforms requires PASEC to become a different programme. They require it to become a more deliberate one: faster in delivering results, more open with its data, more strategic in its communication, and backed by clearer international support for its mission.

The timeline is urgent. The withdrawal of AFD and the Swiss Agency for Development and Cooperation (SDC) from funding the secretariat creates a financing gap that will have to be filled. The World Bank, the Global Partnership for Education (GPE), the Gates Foundation, bilateral donors, and PASEC member governments all have a stake in the programme's survival. But donors are more likely to step in if they see a programme that is reforming itself, that can spell out what it produces and at what cost, and that has a credible plan for doing more with what it has.

The economic case for PASEC is strong. At nine cents per child per year, PASEC is one of the most cost-effective investments available in African education. The question now is whether the programme and its stakeholders can move fast enough to protect that investment and make it even more valuable.

The authors thank Jack Rossiter, Luis Crouch, Abdullah Ferdous, and Clio Dinthilac for their ideas and suggestions.

DISCLAIMER & PERMISSIONS

CGD's publications reflect the views of the authors, drawing on prior research and experience in their areas of expertise. CGD is a nonpartisan, independent organization and does not take institutional positions. You may use and disseminate CGD's publications under these conditions.

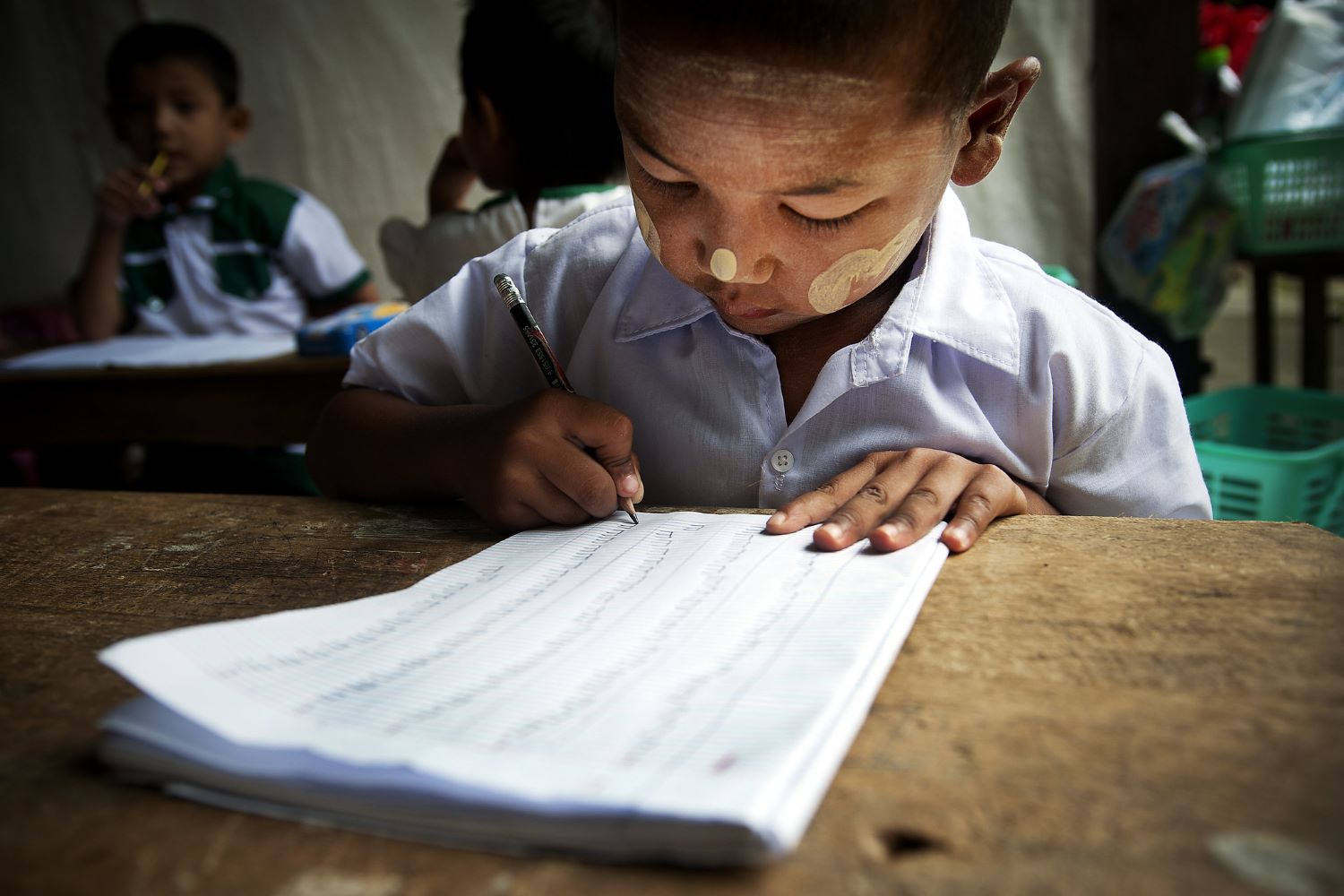

Thumbnail image by: GPE/Rodrig Mbock/flickr