
In 1981, a former sheep farmer took a one-week crash course in computing, had an epiphany, and teamed up with a car tyres millionaire to form DJ AI.

DJ AI announced a new artificial intelligence platform for sale at $600 that could build computer programs for customers. They named their game-changer “The Last One.” All users had to do was follow a set of screen menus, plug, and play, and bingo, they could do away with all those pesky system administrators and programmers. $6 million was spent marketing this powerful piece of magic on both sides of the Atlantic, sometimes to comic effect.

Such dreams of software building software, literally cutting out the middleman, have recurred regularly since the 1960s in peaks and troughs, but we are still waiting.

From the late 90s onwards, however, new data-driven approaches to automation, particularly so-called deep learning, and the involvement of many of the world’s smartest and most loaded companies, have begun to convince many level-headed analysts that this time it is going to be different. The technologies, we are told, can learn, and so it is about time we paid critical attention to the pace at which they are already turning the world of work as we know it upside down, and could do so even further.

Some of the most elegant attempts to evaluate and categorise the impact of these new capabilities on employment and income inequality can be found in papers by David Autor and his co-authors on labour market polarisation and the effect of computerisation on the market demand for skills.

More than a decade after these papers were written, their core ideas and the schemas they proposed continue to inspire the framing of the issues in influential circles, making them the most cited in their writers’ corpus. The Economist is right to describe Autor’s seminal work as enormously influential.

The elegance and rigorous use of data in these two persuasive treatises are not, however, enough to prevent one major convenient generalisation from weakening their key arguments.

The generalisation in question emanates from the conflation of several different patterns of computerisation with “automation,” the replacement of human actors in the chain of work, which is then used as a proxy for technology diffusion and infusion into various modes of work, following in a tradition that also encompasses Goldin and Katz’s equally elegant formulation of the automation question as one of a contention between returns to skills versus returns to algorithms.

Having taken “automation” as the predominant form in which modern technology manifests itself in the workplace, Autor and his collaborators then proceed to construct a spectrum of possibilities for technology’s infusion: complement, substitute, or bypass (CSB).

In the CSB paradigm, modern technology in the workplace tends to complement super-skilled, high-earning, workers, in complex, adaptive, operations, thereby boosting their productivity and bargaining power; substitute for the contribution of most medium-skilled workers in many routine tasks, thus depressing their wage potential; and bypass low-skilled workers, such as drivers, waiters, and janitors, thus rendering their fate somewhat indeterminate even if their numbers grow.

It is not difficult to see why Autor et al.’s extensive use of crosswalking across census-based industrial classification schemes and the Dictionary of Occupational Titles should encourage this neat stratification. Once something is coded, it acquires a hardness that confers rigour and opacity.

Anticipating criticism, they include a caveat about the limitations of task coding:

“These include limited sampling of occupations (particularly in the service sector), imprecise definitions of measured constructs, and omission of important job skills. These shortcomings are likely to reduce the precision of our analysis.”

The composite effect of Autor et al.’s tri-modular scheme, limitations notwithstanding, is a structure of income inequality characterised by a mega-rich 1 percent pulling away from the rest, a stagnant bottom half of the population with converging income patterns, and a highly beleaguered middle class, many at risk of falling into the bottom half.

Workplace automation isn’t new—and it isn’t the whole story in job loss or creation

As already conceded, we have here a rather elegant simplification of some seriously complex stuff, and the deft use of quantitative analysis does it even more credit. Except that most digital and related types of technology adoption in the workplace are not about automation at all. Human replacement is rarely the shaping force of technology impact on business strategy generally, or HR policy in particular. Far more subtle, sublime, and insidious forces are at work. In that sense, the focus on automation when evaluating technology’s impact on work and the workplace can become an expensive, navel-gazing distraction.

Contrary to popular imagination, automation in the workplace is not some modern-day development composed chiefly of hardware, robotics, and human-cognition level embedded algorithms. Instead, it is an old phenomenon consisting primarily of business productivity software deployment in the forms of enterprise resource planning (ERP), customer relationship management (CRM), and human capital management (HCM) solutions. And however far back one goes, process control and risk management have always competed with increased flexibility for priority in the business case for these systems.

Still, the track record of these automation endeavours has been rather lacklustre. More than 80 percent of business process and workflow automation efforts and 85 percent of Big Data initiatives typically fail. Of course, attempts at hardware-intensive automation, rarity aside, fare no better.

Owners, investors, and senior managers, all too aware of this harsh reality, have tended to invest in a much more diversified suite of digital solutions to address risks and opportunities in the work environment and in the marketplace.

These have been the principal tensions, not whether to automate or not to automate. And because automation is hardly some teleological endgame for technology adoption, any analysis that commences by asking whether job losses due to automation shall happen at this rate or the other is already on the wrong footing. Job losses and job creations in today’s digital environment do not happen along an axis defined by ability/willingness to automate; they occur as a consequence of a complex interplay between technology and business objectives with no pre-set outcomes based on specific trends in technology.

Yet, so besotted are CSB proponents with the “discrete task automation via discrete machine capability” motif, having taken for granted that the success of this action is what drives computerisation, that they even go further to attribute the patterns of substitution and complementation they claim to perceive to the “declining price of computer capital.” The “discrete-centric” model of such analysis is reflected in the intention behind the census question sample in the data appendixes of Autor et al.: “Do you use a computer directly at work?”

Is a “computer” as used here a modern cash register, point of sale terminal, or x-ray visual display unit? Or even a modern smartphone? Such a traditional view of the form factors in which computerisation manifests in the workplace leads one to question whether it is even possible at all to view computerisation in such a limited fashion and still theorise about its impact on any domain cogently.

At any rate, there isn’t much foundation to the causal chain of reduced cost of computerisation and its presumed effect on automation. There is no evidence that “computer capital” prices, if “computer capital” is to be taken as a substitute for “digital technology investments,” or for the “IT budgets” of firms, the most relevant dimension of the workplace transformation and employment dynamics debate, have fallen at all.

In fact in the software industries of the United States (where Autor et al. collected basically all their data), producer price inflation has been on a consistent upward climb. It is completely unsafe to use the often-referenced fall in prices of electronics, and in particular computer chips (the root cause of FLOP, bandwidth, DNA sequencing, and similar “discrete capability” price deflation of recent years), as a proxy for “computerisation” in any serious analysis of industry absorption of technology in today’s world of convergence.

The power of complexity

As Paul Strassmann, the former CIO of NASA whose insights into many of these questions are quite profound, has pointed out, the use of deflationary indexing of electronic prices to approximate the trend in costs for enterprise IT is a fundamentally flawed approach, and one that leads to overestimations of IT’s contribution to output growth and productivity. The price of electronics has rarely had much to do with IT budget growth or declines in the modern enterprise.

The reason is simple: computerisation in modern industry is a composite affair involving complex bundles of hardware, software, and services, with software investments being the most progressive cost component due to the ongoing virtualisation of infrastructure.

Thus, whilst the prices of discrete technologies (“distechs”) are falling, the prices of viable technology systems (VTS) are rising on account of the deepening link between viability and high complexity. This is a critical point.
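The deflator problem can be made concrete with a small sketch. Every figure below is a made-up assumption chosen purely for illustration (the price indices, the budget weights, and the flat nominal budget are not drawn from Strassmann or any dataset); the point is only to show how deflating an IT budget by an electronics price index, rather than by an index for the whole hardware-software-services bundle, inflates the apparent "real" investment.

```python
# Illustrative sketch only: all numbers are hypothetical assumptions,
# not figures from the essay or from any statistical agency.

def composite_index(component_indices, weights):
    """Weighted average of component price indices (base year = 1.0)."""
    assert abs(sum(weights.values()) - 1.0) < 1e-9, "weights must sum to 1"
    return sum(component_indices[name] * weights[name] for name in weights)

def real_spend(nominal, price_index):
    """Deflate a nominal amount by a price index."""
    return nominal / price_index

# Hypothetical nominal IT budget, unchanged since the base year.
nominal_it_budget = 100.0

# Hypothetical price indices today: electronics got cheaper,
# software and services got dearer.
price_indices = {"hardware": 0.5, "software": 1.3, "services": 1.2}

# Hypothetical shares of the IT budget going to each component.
budget_weights = {"hardware": 0.2, "software": 0.4, "services": 0.4}

# Deflating by the electronics index alone (the approach criticised above).
hardware_only = real_spend(nominal_it_budget, price_indices["hardware"])

# Deflating by an index for the whole bundle.
bundle_index = composite_index(price_indices, budget_weights)
bundle_based = real_spend(nominal_it_budget, bundle_index)

print(f"bundle price index:             {bundle_index:.2f}")
print(f"'real' spend, electronics only: {hardware_only:.1f}")
print(f"'real' spend, full bundle:      {bundle_based:.1f}")
```

Under these assumed numbers the electronics-only deflator makes a flat budget look like a doubling of real IT investment, while the bundle deflator shows a modest decline. Different weights would give different magnitudes; the direction of the distortion is what matters for the argument.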

Most technologies in the form experienced by the consumer nowadays are evolving very rapidly away from the bounded offerings they used to be in the last century. “Evolve” is an apt word, because newish technologies do continue to evolve even when in the hands of the consumer due to increased “servification,” “convergence” of multiple technology paradigms in unit offerings, and the accelerating “connectedness” of the user experience.

It is not too difficult to appreciate why autonomous cars, citing but one popular example in the CSB literature, are not really cars in the classic automobile sense at all. Or to think of them as complex composites of satellite, mapping, radar, and data repository networks guiding the navigation, perception, insurance management, regulatory pace-catching, and risk control elements of this complex system. As they scale, these complex factors don’t necessarily scale linearly with them, leading to lagging costs and a degree of complexity that does not come cheap.

Simplicity is certainly crucial to lower costs of adoption. High IT installation costs in the healthcare sector are rarely mentioned when commentators lament the delay in digitisation to save costs, seemingly oblivious to the irony of the world’s most digitised healthcare system[1] being accused of not spending enough on technology. Yet without appreciating the cost of complexity head-on, as the startups that rescued Obamacare’s healthcare.gov did, progress is impossible.

To engage and contain complexity effectively, however, requires multiple innovators to tackle not merely the production process (the domain of traditional co-innovation and the strategic supply chain collaboration literature) but also the selling and customer support process. I have termed this imperative “fractal simplicity”—the navigation of complexity at scale through patterns of collaboration that reproduce themselves through loose networks. (I explore the concept of fractal simplicity in a recent related CGD note.)

Most free-to-adopt technologies are, in fact, exemplary fractal composites. Google is a seamless amalgamation of the information pooling efforts of millions of agents, as is Facebook. At any level of scale, the power of the core network reproduces their essential character. That is why these companies do far less well when they try to replicate their successes in distech domains where, for whatever reason, their embrace of fractal simplicity has been lukewarm.

An obvious derivative of the simplicity-complexity argument is the realisation that new technologies create considerable moral seepages and ergonomic externalities. Fraud is usually rampant, easy to scale, and hard to anticipate, exacerbating control and risk costs. One reason for this is related to the very point made earlier: firms are under intense pressure to rapidly select emerging distechs, often before they have matured or even been fully understood, and couple them together with various other non-tech elements into complex composites. The new technology production process thus proliferates gaps, security loopholes, and weak links in the chain.

At one level, the moral seepages create growing task intensity as VTS owners and operators invest substantially in qualitative advances that do not create revenue and quite often create only a few jobs. At another level, the ergonomic externalities trigger over time (as containment fails) new control layers, partnerships, public diplomacy, customer appeasement, and so on, contributing to task extensity, which usually does create jobs, though not always productivity-enhancing ones.

Confusion arises because the task extensity and task intensity dynamics of VTS platforms in the new economy do not necessarily mirror the behaviour of distech productivity expansion.

In that regard, commentators oversimplify the true forces at play with statements like this: “The 18th century spinning jenny reduced the cost of production, by making it possible for one worker to weave as much cloth as eight workers did prior to its invention.” Such a view rightly describes a world where technology progress was driven predominantly by largely discrete technology forms like spinning jennies and internal combustion engines, which could be assembled under one producer’s full control into relatively value-autonomous units like transport fleets and textile mills. The world of digital convergence is dramatically altering that picture.

Take police body cameras. Their introduction was fuelled by a demand for greater social control, risk management, and improvements in the quality of law enforcement outcomes. The ergonomic externalities have nevertheless been rampant, as requests for footage, the need for legal cover, and necessity for policy keep-up combine to force various intensive and extensive measures. Whilst the net result has been more job creation, it is also clear that superior technologies facilitating access, retrieval, confidentiality, facial recognition, and anti-tampering are required to convert these cameras from distech items/forms into true VTS platforms, with uncertain impacts on productivity.

We see the same with the automation of tollbooths. Over time, what was initially conceived of as simple mechanical contraptions requiring basic electronic payment system integration with motion sensors has been quickly found to demand complex upgrades into video tolling platforms that must be linked with law enforcement databases, facial recognition, and so on.

Starting from the simple control-risk need to read number plates, it was soon discovered that doing that well [check page 24 for a fascinating list of failure points] requires other integrations. And now we find that video tollbooths are more expensive than human-manned ones. The benefits of deploying emerging tolling technology can no longer be explained simply by a calculation of how much costs can be saved if the tollbooth operator can be replaced by a mechanical contraption but instead by a whole host of crime-fighting, fraud-mitigating, traffic-alleviating, and confidence-building objectives. That costs and complexity have had to escalate is merely the price of technology-driven “progress.” If a company underestimates this, it gets egg on its face, like Daimler.

Insights on technology adaptation in the workplace

Some important insights emerge.

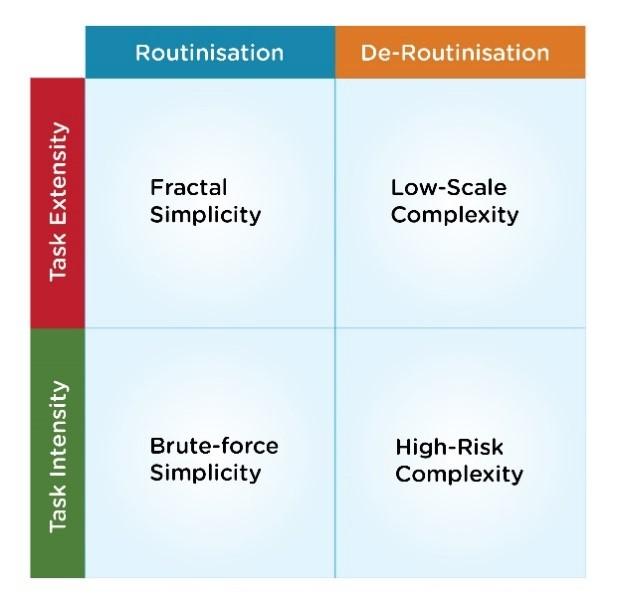

First, a sounder framework for appreciating the forces acting on technology adoption in the workplace would combine the task intensity and extensity effects of technology’s tendency to spill over into new arenas of concern into a single dynamic description, one that replaces the automation-focused view present in the discrete-centred CSB approach.

I attempt this by proposing that technology adoption in the digital convergence world of VTS platforms operates along two axes of tension:

- Attempts to routinise and standardise complex operations, more to deal with the moral hazards and seepages of new technologies than to replace workers.

- Attempts to de-routinise simple tasks in order to reduce the ergonomic externalities created by a tunnel-vision compositing of discrete technologies to address system-level requirements.

Figure 1. The tensile axes of simplicity and complexity driving computerisation

Firms strive to routinise and standardise tasks in order to improve the efficiency so crucial for scale. When they start to be successful, they create room on the leading edge of their operations for advanced technology. The precise species of advanced technology depends on a number of factors—and may or may not be automation-focused. The primary purpose, however, is usually to support enhanced task intensity, which, in the context of routinisation, has tended to be automation. Automation should thus be seen as but one common opportunistic dividend of the drive for scale.

Second, because of the high interconnectedness and the many interdependencies of the integrated form factors converging from multiple nodes to create modern viable technology systems, the drive for scale often manifests as brute-force simplicity.

Externalities then ensue, which force companies to de-routinise various ergonomic layers of the technology system, in turn driving task extensity to contain emerging risks and keep hazards under control. The use of advanced technology in this context is almost always the opposite of automation, manifesting as it does in intense levels of managerial control, amorphousness, improved dialoguing tools, hierarchical protocols, and so on. When new road toll transponders get hacked, new forms of human overlay and systematics may become necessary, often intertwined with additional investments in auxiliary technologies.

A few case studies drive home the point

A few additional well-known examples might be helpful here.

Trucking

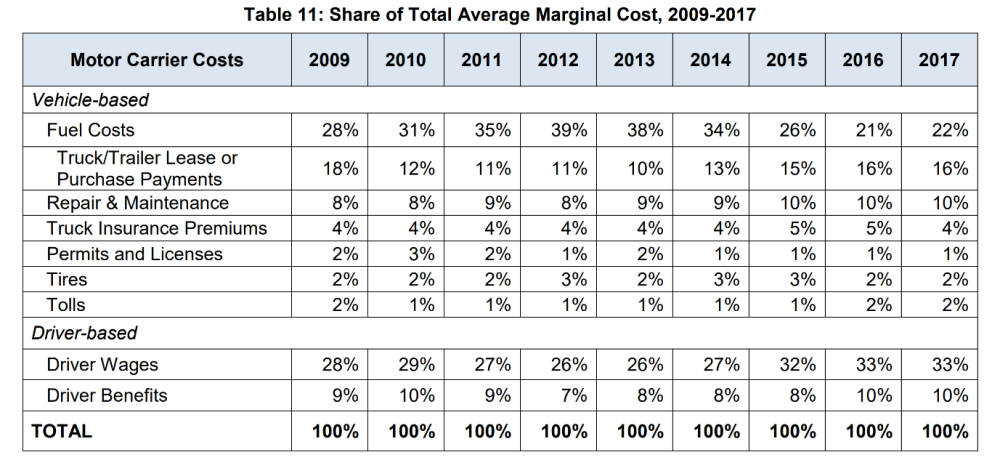

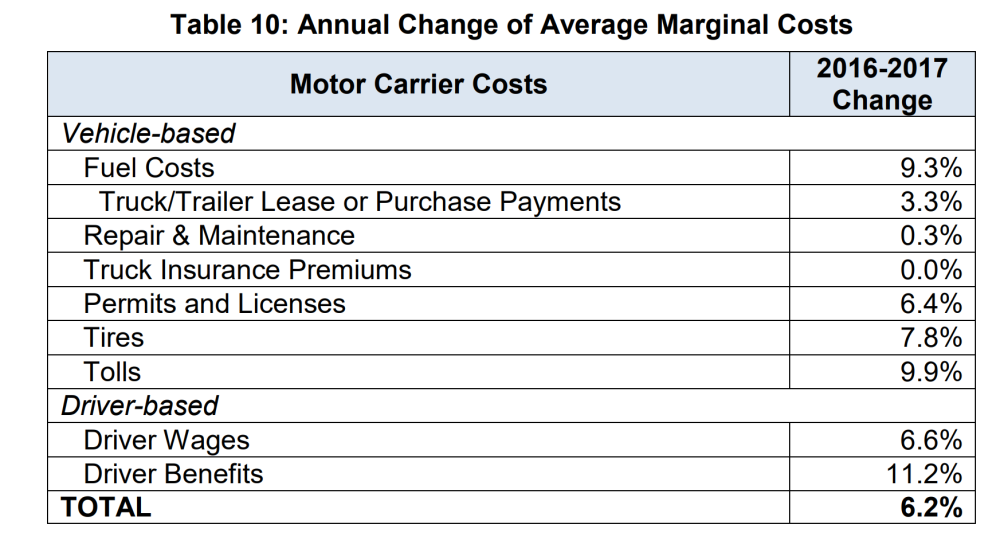

Long-haul trucking is a human-replacement opportunist’s dream come true. A better poster child for automation one cannot find. The two tables below, extracted from the 2018 American Transportation Research Institute Analysis of Trucking Costs report, show that human labour costs account for more than 40 percent of total running costs in long-haul trucking. The same report also laments a shortage of 50,000 drivers, set to grow to 174,000 drivers by 2026 if trends continue. High labour cost driven by the shortage of qualified personnel: a perfect combo for automation, surely?

Figure 2. Extracts from ATRI 2018 report highlighting labour cost impact on trucking operations in the United States

And indeed, within the autonomous vehicle segment of the AI boom, trucking automation is a big trend. Startups such as Peloton, Nikola Motor, and Embark have become darlings of the tech blogosphere. TuSimple, a US-Chinese startup, is one of the most aggressive pushers of the trend. It offers a “complete solution,” from mapping, localisation, motion planning, all the way down to a proprietary night vision system that it has co-developed with Sony.

The startup has to do that because, as its CTO lucidly attests, there is no such thing as a compact or discrete AI capability. Instead, what you get is “a crew of people each tending in their own way to a big, room-sized monster. The technology is just not mature enough that it can solve all the logistics of autonomous driving by itself.”

The first point to note here is that when people equate AI to automation, they are committing the same intellectual sin that led people to see nanotechnology as a product right up until it diffused into various viable technology systems. AI is a complex mishmash of various intermediary capabilities, which if blended well together can lead to various advanced technology effects.

Second, and following from the above, choosing human replacement as the endgame is but one of many possible pathways to harness those effects. The obvious pathway usually associated with human replacement is cost rationalisation and profit. If that frame is used for trucking, we only need to look at the two tables above to conclude, as does PWC, that nearly half of the industry’s operating costs might be eliminated by AI-enabled automation simply by removing the labour costs and assuming the “falling computer capital costs” paradigm.

But we already know that we can’t. A self-driving truck is not a seamless substitute for a normal truck. It is not even an equivalent substitute in the way that Ford’s automobiles were for horse-drawn carriages. In a world where products are better described as product sprawls, such a substitution is really system for system.

After one has noted the relative proportions of costs associated with capital investments and maintenance in the above tables, one must approach the cost benchmarking exercise not as one would in the distech domain but as one would in the digital services and IT contracting world, where the appropriate term of art is: total cost of ownership. If one does this, one is unlikely to be surprised that substituting the self-driving truck for the normal one would very likely double capital costs; shorten the lifecycle of major components (involving some fascinating dynamics around declining “salvage value” of self-driving cars); alter the insurance model; transform the repair and maintenance cost profile; and require a new interface with ports.

Costs in this trucking model would effectively be double, not half, of the amount in the “normal” model (much of it driven by the “technical debt” imposed as a result of the evolutionary attributes of the emergent viable technology system). Whether or not, on a net basis, more jobs would be created is completely indeterminate, but the important point here is that this would hardly be the primary occupation of any serious investment analyst evaluating the prospect of a complete transformation of a business model from a compact capital asset and labour-intensive operation into a loose platform of interfaces interconnecting ports, satellites, maintenance subscriptions, service contracts with data companies and so on.
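The contrast between the "remove the labour line" calculation and a total-cost-of-ownership view can be sketched in a few lines. The cost shares and multipliers below are hypothetical placeholders, not the ATRI figures and not estimates of real self-driving truck economics; only the labour share of roughly 40 percent and the doubling of capital costs echo the text. The names `CostProfile`, `naive_automation`, and `system_substitution` are likewise invented for this illustration.

```python
# Illustrative sketch only: the cost shares and multipliers are hypothetical
# assumptions, not ATRI data or forecasts.
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class CostProfile:
    """Per-mile operating cost broken down by (hypothetical) component."""
    labour: float
    capital: float
    maintenance: float
    insurance: float
    other: float           # fuel, tolls, permits, and so on
    services: float = 0.0  # data, mapping, and connectivity contracts

    def total(self) -> float:
        return (self.labour + self.capital + self.maintenance
                + self.insurance + self.other + self.services)

def naive_automation(profile: CostProfile) -> CostProfile:
    """The 'discrete' view: delete the labour line, change nothing else."""
    return replace(profile, labour=0.0)

def system_substitution(profile: CostProfile, *, capital_mult: float,
                        maintenance_mult: float, insurance_mult: float,
                        residual_labour: float,
                        new_services: float) -> CostProfile:
    """The system-for-system view: every line of the profile shifts."""
    return replace(
        profile,
        labour=residual_labour,  # remote operators, port handlers, etc.
        capital=profile.capital * capital_mult,
        maintenance=profile.maintenance * maintenance_mult,
        insurance=profile.insurance * insurance_mult,
        services=profile.services + new_services,
    )

# Hypothetical conventional truck, normalised so that total cost is 100.
conventional = CostProfile(labour=40.0, capital=15.0, maintenance=10.0,
                           insurance=5.0, other=30.0)

naive = naive_automation(conventional)
system = system_substitution(conventional, capital_mult=2.0,
                             maintenance_mult=2.0, insurance_mult=1.5,
                             residual_labour=10.0, new_services=20.0)

for label, profile in [("conventional", conventional),
                       ("naive automation", naive),
                       ("system substitution", system)]:
    print(f"{label:>20}: {profile.total():.1f}")
```

With these assumed inputs the naive view reports a 40 percent saving while the system view reports a cost increase. How large that increase is depends entirely on the multipliers chosen, which is exactly why the benchmarking exercise belongs in the total-cost-of-ownership frame rather than the labour-substitution one.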

And that is precisely why major original equipment manufacturer (OEM) firms in the trucking industry are not prioritising human-replacement automation. Players like Scania are focusing on the “creation of product-related services such as preventative maintenance, fleet management and remote monitoring and control of the vehicle.” This “expand customer value,” instead of “rationalise cost by retrenching labour,” strategic worldview follows naturally when one considers the transformation of the product space involved in the advancement of automotive technology, as discussed earlier, and thus takes into account realities like: “The cost of replacing e.g., an electronics unit is basically equivalent to hiring and paying two to three repair workers for a long period of time. Up-time is generally traded for the potential of saving money on repair work.”

If one is going to integrate all manner of not-so-cheap components to create a product sprawl, with indeterminate labour cost-cutting effects, one might as well broaden one’s horizon of what various enterprise objectives can be bundled together to create value. Instead of taking driver shortages as a given, one may decide to reduce driver churn, increase driving comforts, and enhance productivity.

That Scania and many other OEMs are harnessing advanced technologies, including AI and Big Data, to explore all manner of value-creation possibilities instead of prioritising human-replacement automation is merely sound business.

Social media

But not even “value creation” is all-encompassing. Risk control and externalities management are equally expansive.

When Facebook tries to better handle fraud or political misuse of its platform through enhanced data analytics, then starts proposing constitutional courts, or when Uber deploys its data scientists to fight scams, but in the next moment seeks to build new APIs for municipalities and mayoralties to address concerns about the adverse effects of its technologies, these large tech platforms are operating along the above-mentioned axes of tension. Like Volkswagen, their integration of complex new technologies is rarely driven by the automation logic painted by CSB proponents, but more by competing forces to simplify for scale or complexify to contain risks.

In the case of AirBnB and its privacy leakages, such tensions may manifest in liability generation when, in seeking to assure the autonomy of its “host partners,” it becomes evident that such autonomy may also enable racial discrimination. In that case, its deployment of AI-enabled civic technologies (as indeed any anti-discrimination algorithm should be regarded) involves multidisciplinary tech and non-tech teams tasked with making strides in a domain wracked by technical uncertainty and demanding considerable de-routinisation.

No forces of destiny

Alarmist estimates prophesying losses of between 40 percent and 85 percent of jobs in the near future are, in the above light, neither right nor wrong, since the issue is hardly about estimation accuracy. They are simply wrongheaded because they ignore the much more fascinating, and closer to ground truth, dynamics of technology infusion and adoption.

There are no cosmic, inexorable, forces of destiny at work. Whether jobs are created or lost depends on the degree to which companies can reconcile these two forces through an effective navigation of different technology forms and human factors.

“Effective navigation” is, unfortunately, easier said than done. It entails the creation of complex ecosystems capable of turbocharging individual firm growth whilst effectively diffusing the risks among co-innovators jointly owning the customer and public relationships. No wonder, then, that the picture is tantalisingly mixed.

A simple case study in contrasts that illustrates the power of creative ecosystems is the subtle competition between app-stores and freelancer communes, on the one hand, and the 60-year attempt to develop AI systems that can generate code, on the other hand, as was hinted at the beginning of this essay.

In barely a decade, app stores, heavily fuelled by freelancer communes, have grown from literally nothing to $122 billion in revenues, more than 10 million apps, and nearly 500 billion downloads. In six decades, no seriously functional AI “software building software” platform has been successfully commercialised from the various academic and corporate laboratory silos that continue to fixate on the goal. The power of communes and ecosystems in driving growth whilst managing complexity is beyond debate. They enable incredibly simple user interfaces whilst containing, even at the highest scale, the complexity of enabling commercial production and the exchange of millions of complex products by a wide variety of makers. Such fractal simplicity may thus be regarded as the product of deceptively simple networks.

Sadly, developing countries, especially those in Africa, are doing badly in putting together these ecosystems. The majority of African businesses embracing innovative practices, business models, and technologies are too often wrestling with too many scale and risk management tasks. The problem, therefore, is hardly one of automation taking jobs away, but of Africa’s inability to harness opportunities in the digital sector in ways that promote viable technology systems capable of creating jobs.

What about developing countries?

Some commentators have cast the issue of the impact of automation on Africa in the CSB mode by citing the apparent high concentration of automatable, and therefore easily substitutable, jobs.

But the supporting analysis is not tenable. In the case of African agriculture, for instance, the framework immediately breaks down. The average size of an African farm is about 2.5 hectares, compared to 176 hectares in the United States. Automation in such a context is basically meaningless, since quite often these tiny farms are tended by families whose members, some remunerated in kind rather than cash, have little incentive or capacity to replace themselves, nor are there any economies of scale or scope to tempt them into it.

The ongoing desertion of farming in some African countries is driven by the sheer implausibility of productivity under these circumstances—not by automation.

Should automation override the long-standing, complex, and dysfunctional land tenure systems in most African countries, it may well bring more land under cultivation, facilitate commercial-scale farms, and justify more automation on these new farms. But that should see a net increase in jobs, ignoring the counterfactual of subsistence farmers who are running into the towns for every reason other than automation.

As far as the nexus between automation and premature de-industrialisation is concerned, it is safe to note that many developing countries, especially those in Africa, have already undergone massive shedding of large-scale, Fordist, production-line jobs. Take textiles for instance, where Africa has already lost nearly a million jobs over the last decade (that is, about 80 percent of the historical levels) to foreign competition, not automation.

The question thus turns on whether potential future inbound (particularly, “displaced from Asia”) jobs would not come to Africa because high automation in incumbent locations makes such relocation unnecessary.

Whilst there may be some basis to this fear, it is highly speculative, and at any rate, Africa has already embarked on a very different path to increasing manufacturing jobs by embracing modular manufacturing and efficient small-batch production through support from Chinese supply chain strategic collaborators (I use the term Alibaba industrialisation to describe this trend). This phenomenon has become a growing focus of Chinese official policy.

The other mistaken CSB assumption is the notion that human-technology complementation is a purely upper end of labour pyramid play. The bypass logic, whereby non-routine manual jobs simply evade automation and are therefore left to fester in low-wage stagnation regardless of absolute growth in the numbers of such jobs, is not wholly tenable.

Waiters, truck drivers, and janitors can all do with good technology that makes their work contribute more value.

Waiters, for instance, could use tools that make it easier to communicate with the kitchen while still interacting with clients. They could also be continually fed real-time data from table-top surveys around the restaurant, giving them a sense of customer satisfaction.

Instead of using virtual menus to displace waiters, whilst still delivering ordered dishes manually, Inamo could use them to help waiters learn more about their customers’ preferences so that they can help them make better selections. Perhaps Inamo’s rating would then rise higher than its current 3.5 on TripAdvisor because its food wouldn’t strike diners as so mediocre.

The excuse that technology isn’t making as much impact in the blue-collar end of the job market because the tasks cannot yet be automated represents a mere failure of imagination that is neither natural nor sustainable. Automation is anything but the be-all and end-all of computerisation. This point is particularly poignant for places like Africa where the services sector has long attracted informal workers with low education and therefore suffered in productivity terms.

Human cognitive augmentation does not require complex AI. In fact, the simpler the better in most instances. Mechanics in West Africa with barely 6 years of public education have been recorded by ethnographers using YouTube to help them diagnose defects in increasingly electronicised cars.

Likewise, big telecom networks in Africa and fintech companies are exploring how to do more with the giant and still growing network of agents they have precipitated by equipping them with apps to sell insurance, cable subscriptions, basic loans, and so on.

There is no reason, therefore, why the current mass education paradigm, however much in need of improvement, cannot supply the basic foundations for retraining through superior skills acquisition interfaces. The policy implication of this fact is all the more striking given how many unfilled jobs exist even in Africa.

To emphasise that the strict demarcations of the labour spectrum by the CSB framework are limiting, it is important to revisit the much-discussed issue of bank tellers.

What new tech has enabled banks to do is to blend teller and customer service roles whilst keeping the blended roles entry-level. The technologies for credit assessment and the like used by these new workers do not fall into the strict complement-substitute-obviate spectrum, because even where we can describe the situation as one of complementation, it does not happen at the upper end of the skills spectrum. Such operational models, in seeking to transform branch banking into a viable technology system, have redefined roles, fragmented some tasks, and introduced new manual steps, such as helping customers use apps and troubleshoot poor ATM interfaces. More importantly, this is happening in both developed and developing countries.

What we have sought to demonstrate in this brief essay is that the nature of technology incidence on the work spectrum is poorly captured by a focus on strict differentiations across complementation, substitution and bypassing effects. If a macro-framework is needed to help engage with the trends then it is best to look at how the nature of viable technologies for the new economy is changing from compactly integrated offerings into convergent network systems, prompting producers to explore more effective means of expansion without losing control and credibility.

The process through which technologies become viable leads to "product sprawl," a spread of features and counter-features that widen the cross-section of the technology's interfaces. That tension-fraught process of "routinise and de-routinise" we have already described at length is the dominant management headache in technology management and digital business model strategy today.

Product sprawling is also driven by the fact that the true contours of customer, partner, supplier, and enabler preferences are revealed over time and become stable much more slowly than was the case in the past. The process of learning to analyse value in product sprawls follows a pattern that is substantially different from the one that applies to discrete technologies.

Easy dichotomies between blue-collar and white-collar, developing and developed, and complementation and substitution, all lack explanatory power to account for the rich tapestry of phenomena unfolding before our eyes in the global world of work.

In this alternate framing, the choices and decisions of policymakers, thought leaders, and business pioneers, as they play their respective roles in shaping the convergence and network effects, have considerable influence on the emergence of ecosystems that can harness this tension for overall economic growth, thereby creating, on a net basis, more productive jobs even as the disintermediation of classical firms built on monopolising value from discrete technologies leads to some job losses.

In short, given that the machines are not so easy to ride, there is wisdom in investing in the right analytical saddles and stirrups before mounting the bandwagon.

Bright Simons is President of mPedigree and a member of CGD’s Study Group on Technology, Comparative Advantage, and Development Prospects.

This note is part of a special series authored by members of CGD’s Study Group on Technology, Comparative Advantage, and Development Prospects. Learn more at cgdev.org/future-of-work.

[1] The US spends roughly $185 per capita per annum on healthcare IT versus $30 per capita for the European Union.

CITATION

Simons, Bright. 2019. The Machines Are Not So Easy to Ride: Another Take on Automation. Center for Global Development.

DISCLAIMER & PERMISSIONS

CGD's publications reflect the views of the authors, drawing on prior research and experience in their areas of expertise. CGD is a nonpartisan, independent organization and does not take institutional positions. You may use and disseminate CGD's publications under these conditions.