Mobile phone surveys are fast, flexible, and cheap. But can they be used to engage citizens on how billions of dollars in donor and government resources are spent? Over the last decade, donor governments and multilateral organizations have repeatedly committed to support local priorities and programs. Yet how are they supposed to identify these priorities on a timely, regular basis? Consistent discussions with the local government are clearly essential, but so is feeding ordinary people’s views into those discussions. However, traditional tools, such as household surveys or consultative roundtables, present a range of challenges for high-frequency citizen engagement. That’s where mobile phone surveys could come in, enabled by the exponential rise in mobile coverage throughout the developing world.

Despite this potential, there have been only a handful of studies into whether mobile surveys are a reliable and representative tool across a broad range of developing-country contexts. Moreover, there have been almost none that specifically look at collecting information about people’s development priorities. Along with Tiago Peixoto, Steve Davenport, and Jonathan Mellon, who focus on promoting citizen engagement and open government practices at the World Bank, we sought to address this policy research gap. Through a study focused on four low-income countries (Afghanistan, Ethiopia, Mozambique, and Zimbabwe), we rigorously tested the feasibility of interactive voice recognition (IVR) surveys for gauging citizens’ development priorities.

Specifically, we wanted to know whether respondents’ answers are sensitive to a range of different factors, such as (i) the specified executing actor (national government or external partners); (ii) time horizons; or (iii) question formats. In other words, can we be sufficiently confident that surveys about people’s priorities can be applied more generally to a range of development actors and across a range of country contexts?

Several of these potential sensitivity concerns were raised in response to an earlier CGD working paper, which found that US foreign aid is only modestly aligned with Africans’ and Latin Americans’ most pressing concerns. This analysis relied upon Afrobarometer and Latinobarometro survey data (see explanatory note below). For instance, some argued that people’s priorities for their own government might be far less relevant for donor organizations. Put differently, the World Bank or USAID shouldn’t prioritize job creation in Nigeria simply because ordinary Nigerians cite it as a pressing government priority. Our hypothesis was that people’s development priorities would likely hold regardless of the development actor in question, and possibly across different timeframes and question formats as well. But we first needed to test these assumptions.

So, what did we find? We’ve included some of the key highlights below. For a more detailed description of the study and the underlying analysis, please see our new working paper. Along with our World Bank colleagues, we also published an accompanying paper that considers a range of survey method issues, including survey representativeness.

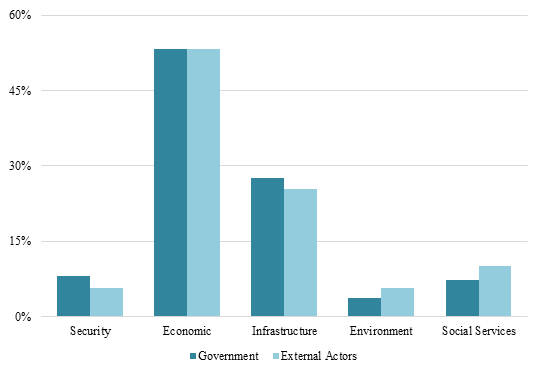

1. Executing Actors Rarely Affect Citizens’ Development Priorities

We find very little evidence that respondents cite different development priorities depending on whether they are asked what their government or what external partners should do. There are a few exceptions, such as Ethiopians raising social services more frequently as a priority for external partners than for their own government (14 percent of responses versus 7 percent). Nonetheless, these instances were rare and almost never changed the rank order of priorities within the focus countries.

Figure 1 – Country Development Themes by Cited Executing Actor, Mozambique

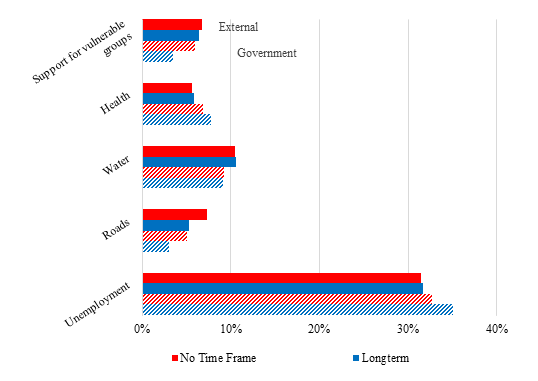
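For readers who want to see what this kind of comparison involves, here is a minimal sketch in Python. It is not the analysis from the working paper. The response lists are hypothetical: only the 14 percent versus 7 percent social services shares echo the Ethiopia figures cited above, and every other category and count is invented for illustration. The sketch computes each priority's share of responses under the two executing-actor framings, then checks whether the rank order of priorities is the same.

```python
from collections import Counter


def shares(responses):
    """Return each priority's share of all responses (fractions summing to 1)."""
    counts = Counter(responses)
    total = sum(counts.values())
    return {priority: n / total for priority, n in counts.items()}


def rank_order(share_by_priority):
    """List priorities from most to least cited, breaking ties alphabetically."""
    return sorted(share_by_priority, key=lambda p: (-share_by_priority[p], p))


# HYPOTHETICAL responses, not data from the study. Only the 7% and 14%
# social services shares mirror the figures quoted in the text.
government = (["jobs"] * 40 + ["infrastructure"] * 30
              + ["education"] * 23 + ["social services"] * 7)
partners = (["jobs"] * 38 + ["infrastructure"] * 28
            + ["education"] * 20 + ["social services"] * 14)

gov_shares = shares(government)
ext_shares = shares(partners)

# Gap in share for each priority: external partners minus national government.
gaps = {p: ext_shares.get(p, 0.0) - gov_shares.get(p, 0.0)
        for p in set(gov_shares) | set(ext_shares)}

# True when both framings produce the same ordering of priorities.
same_ranking = rank_order(gov_shares) == rank_order(ext_shares)

for p in rank_order(gov_shares):
    print(f"{p}: government {gov_shares[p]:.0%}, partners {ext_shares[p]:.0%}")
print("Same rank order:", same_ranking)
```

In this invented example, the share for social services doubles between framings while the ordering of priorities stays the same, which is the pattern the paragraph above describes. A real analysis would also need sample sizes and a significance test, neither of which this sketch attempts.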

2. Different Timeframes Only Modestly Impact Response Patterns

We find little evidence that development priorities change based upon different stated timeframes. In addition, the priorities that exhibit possible timeframe effects appear as second- or third-tier issues, with each accounting for only 2 percent of country-level responses on average. The only exception is health in Ethiopia, which respondents cite more frequently as a long-term priority for external partners.

Figure 2 – Timeframe Effects on Most Popular Development Priorities, Zimbabwe

3. Close-Ended Question Response Options May Be Sufficient

We were unable to definitively assess whether respondents provide different answers depending on the questionnaire format (i.e., close-ended or open-ended). This is due to high survey attrition rates and the significant number of unusable responses in the open-ended survey samples. However, the small samples do suggest that our close-ended response options adequately captured people’s development themes and priorities.

Overall, we find that mobile phone-based IVR surveys may be a promising tool for engaging citizens about their top development priorities. Moreover, our findings suggest that a single survey instrument may be adequate for use by different actors, such as bilateral donors, multilateral agencies, and national governments. However, our results also suggest that appropriate caution is still required. This is particularly the case when analyzing less frequently cited priorities, which may be more prone to timeframe or executing actor effects. In this sense, mobile surveys should be viewed as a flexible, low-cost supplement to more comprehensive household surveys, not as a permanent replacement.

**Explanatory Note:** The Afrobarometer surveys ask the following question: “what is the most pressing problem that the national government should address?” The survey instrument does not include a separate question on priorities for external partners, such as development agencies and NGOs. While Latinobarometro surveys also ask about respondents’ most pressing problems, they do not reference either the respective national government or external actors. Therefore, the potential sensitivity concern referenced above relates more to the Afrobarometer survey data and less to the Latinobarometro data.

DISCLAIMER & PERMISSIONS

CGD's publications reflect the views of the authors, drawing on prior research and experience in their areas of expertise. CGD is a nonpartisan, independent organization and does not take institutional positions. You may use and disseminate CGD's publications under these conditions.