In our recent CGD report Schooling for All: Feasible Strategies to Achieve Universal Education, we make the case for (and against) specific public investments in education in low- and lower-middle-income countries. We argue for prioritizing not just “what works” but “what scales.”

When it comes to what actually works at scale, we characterised two sets of policies:

- Type A policies that are hard to get right at scale—whilst perhaps more effective when done well, they require skilled judgment and difficult behaviour change, and

- Type B policies that are hard to get wrong at scale—with perhaps more modest effect sizes, but which are more logistical, and require less discretion.

So, is edtech a type A or type B policy?

School closures during the COVID crisis led many to hope that edtech could help to keep children learning. The application of technology can, in theory, remove at least some of the need for skilled human judgement (making edtech a type B, or scalable, policy). But deploying new adaptive, personalised learning software can require skilled project managers and coaches to keep children engaged (making it instead a type A policy, hard to scale). In Schooling for All, we gave level-adapted software, or edtech, as an example of an intermediate policy somewhere between type A and type B, where more empirical evidence is probably required before we can judge the difficulty of implementation.

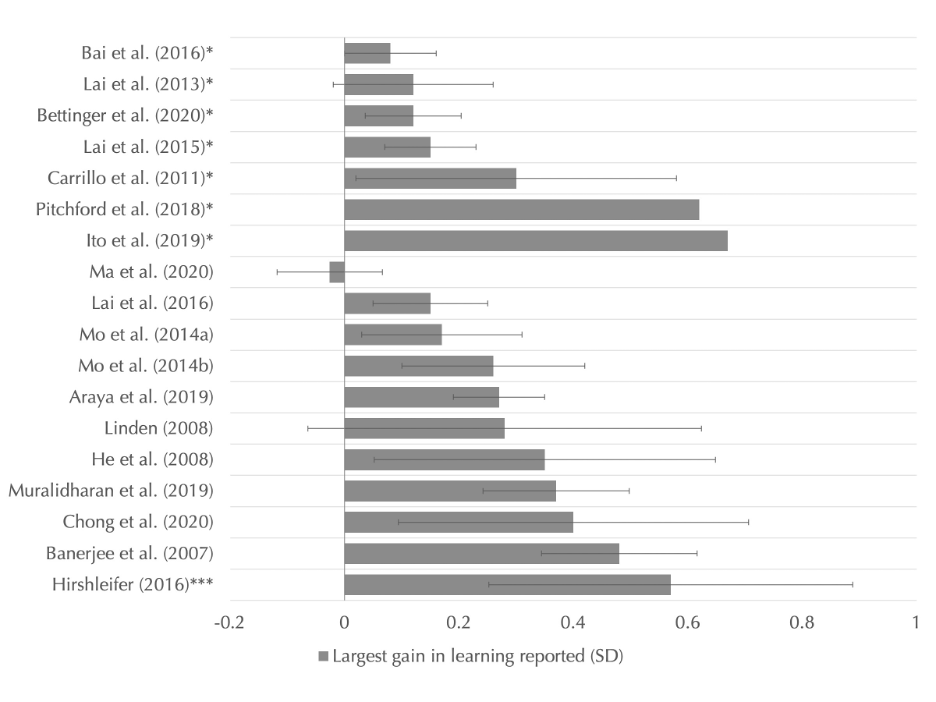

I recently revisited this question with data from Daniel Rodriguez-Segura’s 2020 systematic review. He reviewed 67 studies, each set in a developing country, that evaluate the use of technology in education using an experimental or quasi-experimental research design.

He classifies edtech into four categories: self-led learning software, improvements to instruction, access to technology, and technology-enabled behavioral interventions. I chose to focus on the first two, which are focused on learning, and to compare the scale of interventions to their effect sizes, much as we do for other interventions in Schooling for All.

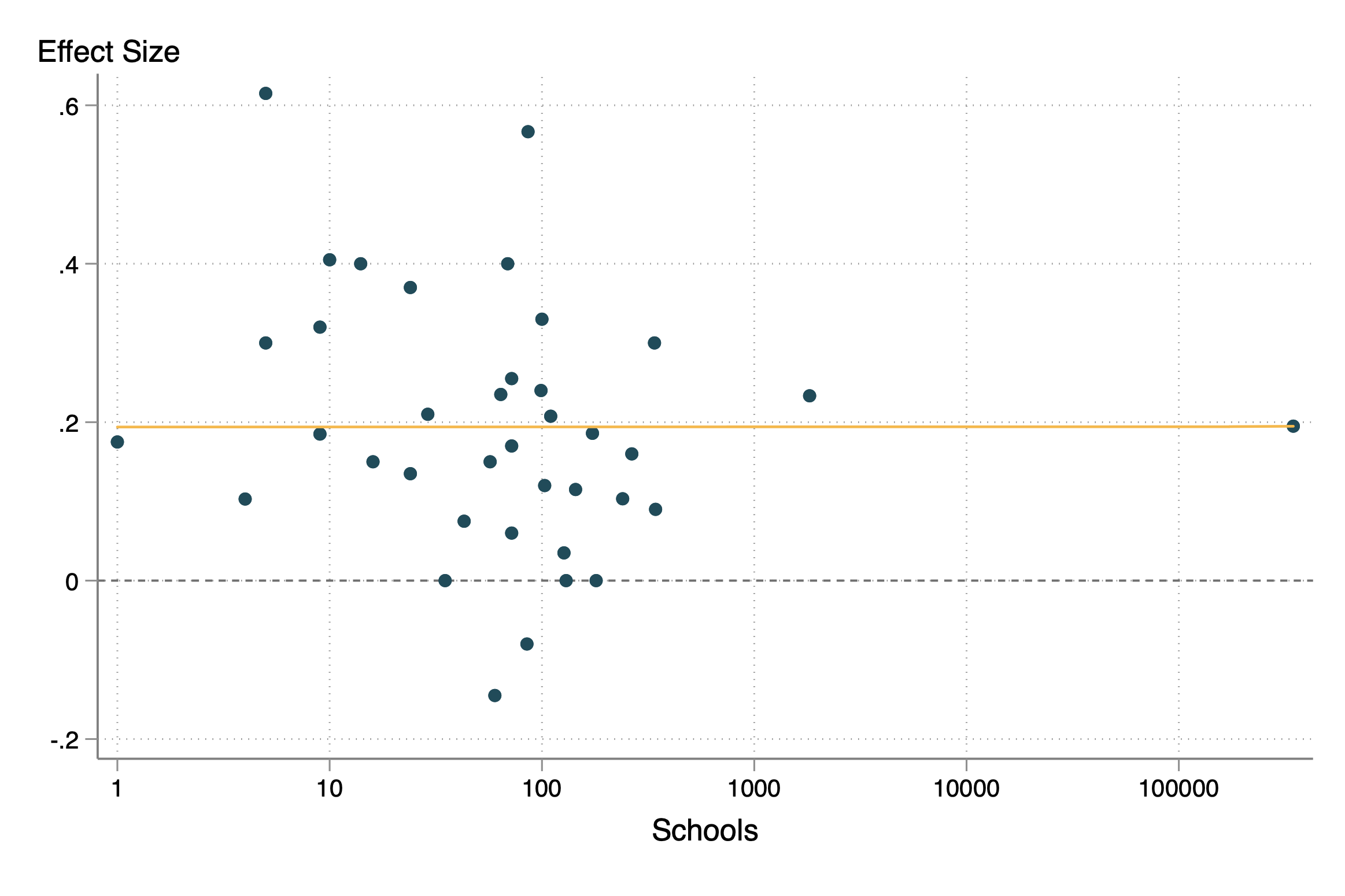

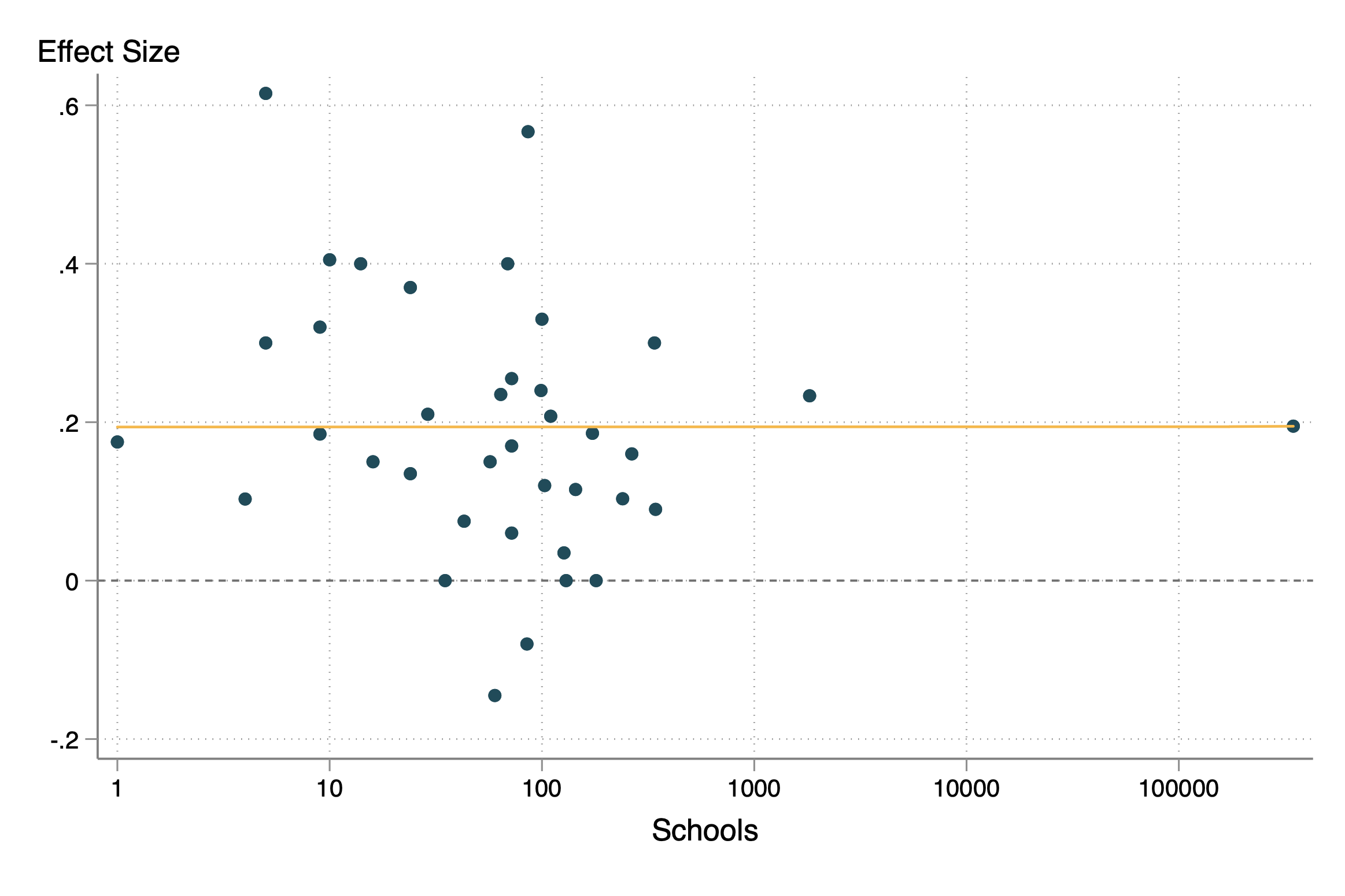

Figure 1: The effect of edtech, by intervention scale

So, does edtech scale? Maybe.

The scatterplot of scale (number of schools) against effect size produces a remarkably flat regression line, but it includes only two “at-scale” studies (Figure 1). Both studies involved broadcasting expert teachers via satellite internet from central locations to rural schools. One, from China, is a study of “the largest ICT project in the world to date”, which served over 100 million students in 346,206 rural primary and middle schools (Bianchi et al 2020). The other is from Karnataka state, where expert teachers delivered lectures from a studio in India’s technology hub Bangalore. In Karnataka the lectures were accompanied by multimedia content, and by a 10-minute video or phone link for questions at the end of each lecture (Naik et al 2020). The China study is also notable not just for its stunning scale but for going beyond short-term test scores to look at long-run outcomes, including increases in earnings.
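For readers who want to check the "flat line" claim against their own extract of the review data, here is a minimal sketch of the calculation. It is not the code behind Figure 1. It assumes scale is measured as the number of schools and put on a log scale (my assumption, since study sizes span several orders of magnitude), and the function name `slope_on_log_scale` is hypothetical.

```python
import math


def slope_on_log_scale(schools, effect_sizes):
    """Fit effect_size = intercept + slope * log10(schools) by ordinary least squares.

    `schools` and `effect_sizes` are equal-length sequences, one entry per study.
    The log10 transform is an assumption of this sketch, not something taken
    from the review or from Figure 1.
    """
    if len(schools) != len(effect_sizes) or len(schools) < 2:
        raise ValueError("need two or more paired observations")

    x = [math.log10(s) for s in schools]
    mean_x = sum(x) / len(x)
    mean_y = sum(effect_sizes) / len(effect_sizes)

    # Sum of squared deviations of x, and cross-deviations of x and y.
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("all studies have the same scale")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, effect_sizes))

    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept
```

A slope close to zero would mean that effect sizes do not systematically shrink as programs grow. With only two at-scale studies, though, the right-hand end of any such line rests on very few points.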

An additional working paper released after Rodriguez-Segura’s review looks at a similar program in Mexico—the “telesecundaria” secondary schools that deliver lectures by satellite TV, which have been operating for over 50 years and serve around 17 percent of all secondary students, with positive effects on test scores (Borghesan & Vasey 2021).

So, what works at scale seems to be broadcasting lectures to schools, a 50-year-old innovation. But the jury is still out on the more recent wave of innovation in personalised adaptive instruction software.